The day must come when electricity will be for everyone, as the waters of the rivers and the wind of heaven. It should not merely be supplied, but lavished, that men may use it at their will, as the air they breathe. - Emile Zola, “Travail”, 1901

Abundant electricity is a defining feature of the modern era. At the turn of the 20th century electrical power was a rare, expensive luxury: in 1900 electricity provided less than 5% of industrial power in the US, and as late as 1907 was in only 8% of US homes. Today, however, 89.6% of the world’s population has access to electricity (97.3% if you just consider urban areas), and Wikipedia’s “list of countries by electrification rate” has 123 countries sharing the top spot at 100% electrification.

Electrical service is considered critical in a way that’s different from most other services. Even a brief interruption in electrical power is considered a serious problem in industrialized countries, where power outage durations are typically measured in minutes per year. To put this in perspective, the average outage time in the US is around 475 minutes per year, which is considered especially unreliable despite representing ~99.9% uptime. By comparison, Germany averaged just 12.7 minutes of power outages per year in 2021, a remarkable 99.998% uptime.
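The uptime percentages follow directly from the outage minutes. Here is a minimal sketch of that arithmetic, assuming a 365-day year; the function name `uptime_percent` is just an illustrative choice.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, assuming a non-leap year


def uptime_percent(outage_minutes_per_year: float) -> float:
    """Share of the year with power available, as a percentage."""
    return 100.0 * (1.0 - outage_minutes_per_year / MINUTES_PER_YEAR)


# Outage figures quoted in the text above
for country, minutes in [("US", 475), ("Germany", 12.7)]:
    print(f"{country}: {uptime_percent(minutes):.3f}% uptime")
```

Even 475 minutes is less than a tenth of a percent of the year, which is why the US figure still rounds to roughly 99.9%.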

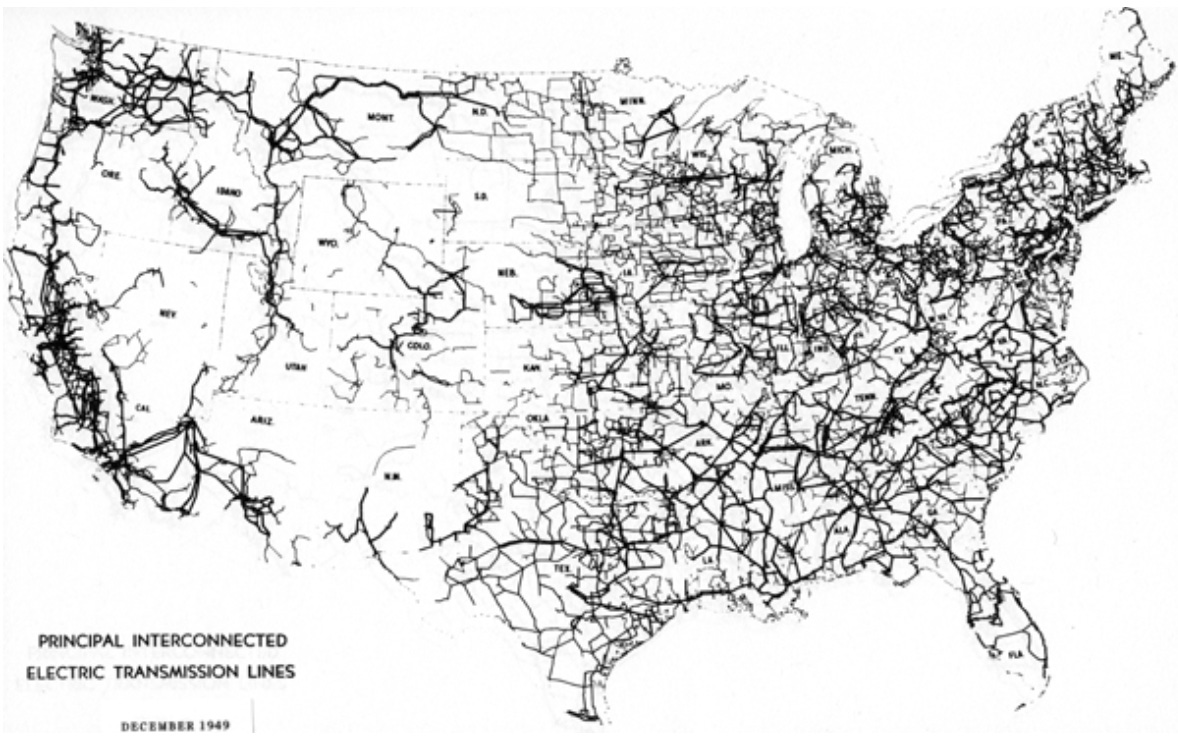

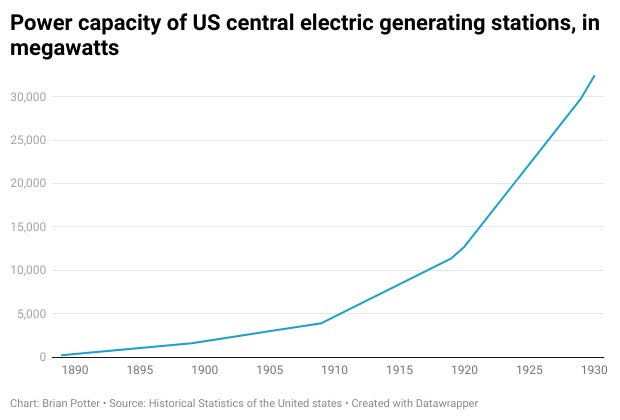

Electricity’s transition from a luxury good to the foundation of modern life happened quickly. By 1930, electricity was available in nearly 70% of US homes, and supplied almost 80% of industrial mechanical power. By 1950, the US was tied together by an enormous network of high-voltage transmission lines.

How did electrical power become ubiquitous? How was the system for distributing it, which makes modern civilization possible, built? Let’s take a look.

The incandescent lamp

The origins of the modern electrical system can be traced to the invention of the incandescent lamp in 1879.

Using electricity to generate light predates the incandescent lamp. Arc lamps, which generate light by creating an electrical arc between two electrodes, were used for lighthouses as early as 1858, and for street lighting by 1876. By 1878, the streets of Paris were lit by arc lamps, and that same year, inventor Charles Brush began installing electric arc lighting systems in US cities such as Boston, Philadelphia, New York and San Francisco.

(Arc lamps weren’t the only user of electric power prior to the incandescent lamp. In the early 1880s electric power, mostly provided by batteries, was also used for “a host of other industries” (Jonnes 2003), such as telegraphs, telephones, stock tickers, and burglar alarms).

Although arc lamps were useful for lighting streets and the interior of large buildings, they produced an incredibly intense light that couldn’t be looked at directly, and which was too bright to be used in homes and smaller businesses. Instead, homes in urban areas typically used gas lighting, which came with several drawbacks. Gas lights gave off a low quality light that made it impossible to distinguish between green and blue, and each lamp had to be individually lit and snuffed out. The emissions from the burning gas would blacken the inside of the lamp—and the interior of the room they were in—over time, and crowded rooms with gas lighting “could quickly become deficient in oxygen and make people feel ill” (Klein 2008). And if a gas light was extinguished without turning off the gas, it could potentially asphyxiate its owner.

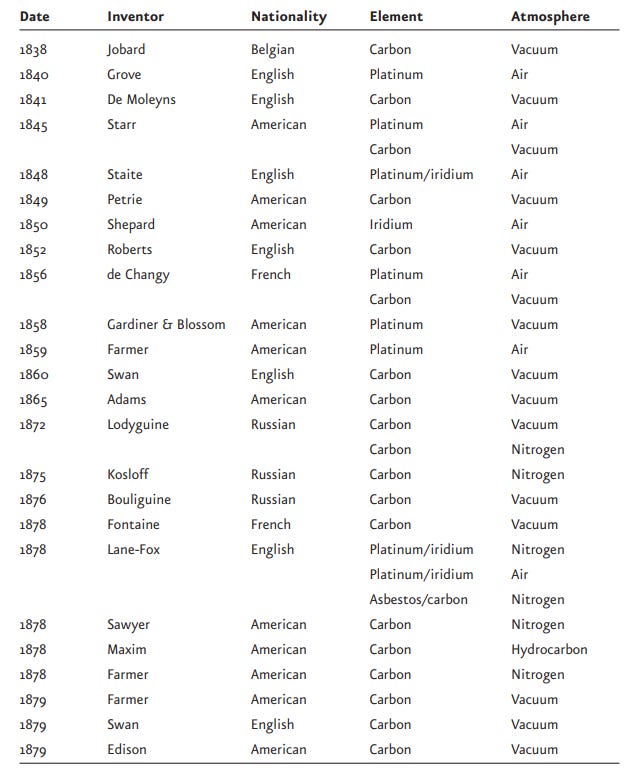

Electric lights were a potential solution, but if they were going to be used in homes they needed to be much less bright than arc lamps. This problem, which became known as “subdividing the light”, was the task that Thomas Edison set himself to in 1878. Rather than an electric arc, Edison aimed to generate light by incandescence - heating a resistive material by running electrical current through it, until it became hot enough to emit light. Electrical incandescence was first demonstrated in 1761, and in 1802 scientist Humphry Davy created an incandescent light that ran electrical current through a thin strip of platinum. Prior to Edison, there had been at least 20 attempts to try to create a practical incandescent lamp.

Edison succeeded where his predecessors had failed by creating a higher vacuum in the bulb than had previously been possible, and by using a high-resistance carbon filament (Friedel et al). The result was “a bright, beautiful light, like the mellow sunset of an Italian autumn” (Jonnes 2003) that lasted long enough to be practical.

But the incandescent lamp wasn’t worth much without a way to deliver electricity to the bulb. So Edison devised an entire electrical generation and distribution system to power the lamps based around a central power station for customers in the surrounding area. The use of a high resistance filament in the bulbs, which allowed light to be generated with a comparatively small amount of electrical current, was crucial to Edison’s plans. By limiting the amount of current, the size (and thus expense) of the copper wires connecting the lamps to the power station was minimized, making it possible for incandescents to be competitive with gas lights.

Edison’s first central generating plant in the US came online in 1882 at Pearl Street in New York City. It was powered by coal-fired reciprocating steam engines connected to six 100 kilowatt generators designed by Edison. Originally, 1,284 incandescent lamps were connected to the station (though only 400 were lit on the first day), and over the next several months another 2,000 lamps were added. By the following year, the Pearl Street Station was powering over 10,000 incandescent lamps.

In addition to central stations, Edison also built “isolated” power stations that provided power to a single customer. These dedicated plants initially proved much more popular than central stations, as it was often easier to convince a single customer of the benefits of electric lighting than it was to sign up many customers for a central station. By 1886 Edison’s company had installed 58 central stations and 702 isolated stations, which combined powered over 300,000 incandescent lamps.

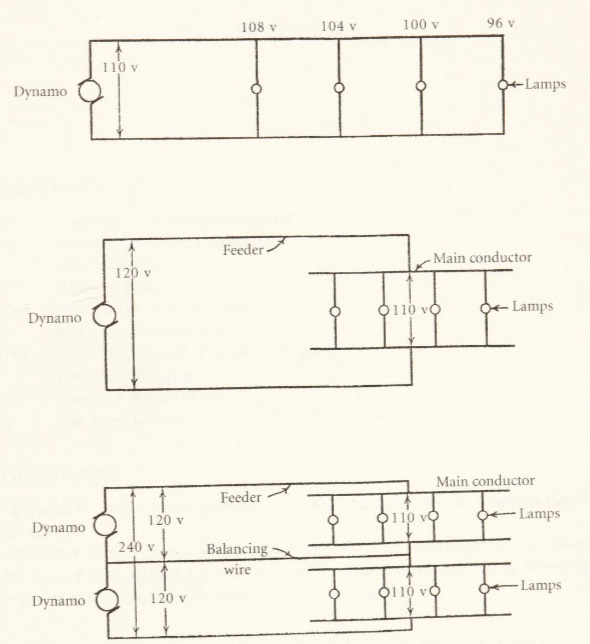

Edison was determined to pursue the central station model and continued to look for ways to lower the cost of centralized power by reducing the amount of copper—by far the most expensive element—used in the system. At first, Edison planned his electrical networks as a “feeder and main” system, which reduced the cost of copper wires by 87% compared to the previous layout. This was followed by his “three-wire system,” which reduced copper usage by another 62%. Following the development of the three-wire system, the central station business underwent a huge expansion and central stations “began to be built in great numbers throughout the world.” By 1891, Edison and his two main competitors (Westinghouse and Thomson-Houston) had installed more than 1,100 central power stations.
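To get a feel for how the two savings stack up, here is a small sketch of the arithmetic. It assumes the 62% saving applies to the copper that remained after the 87% saving, which is one reading of “another 62%”; under a different reading the combined figure would change.

```python
# Assumption: the savings compound, i.e. the three-wire system's 62% is taken
# from the copper still needed after feeder-and-main removed 87%.
FEEDER_AND_MAIN_SAVING = 0.87
THREE_WIRE_SAVING = 0.62


def remaining_fraction(*savings: float) -> float:
    """Fraction of the original copper still needed after successive savings."""
    remaining = 1.0
    for saving in savings:
        remaining *= 1.0 - saving
    return remaining


left = remaining_fraction(FEEDER_AND_MAIN_SAVING, THREE_WIRE_SAVING)
print(f"Copper remaining: {left:.1%}; combined saving: {1 - left:.1%}")
```

Under that assumption, the two changes together cut copper use by about 95% relative to the original layout.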

The war of the currents

Edison’s electrical system was a direct current (DC) system, meaning current ran continuously in one direction over the circuit. But a DC system had a major flaw: it couldn’t supply power economically more than a mile from the power plant. The reason for this is that when electrical current flows through a wire, the wire’s resistance causes some power to be lost as heat. As a wire gets longer, its resistance increases and so does the power loss. Power losses in a wire are equal to the resistance times the current squared, which means high voltage/low current transmission will have much less power loss than low voltage/high current transmission. (Current is the flow of electrical charge and voltage is the difference in electric potential between two points. If we analogize this to flowing water, current is equivalent to the volume of water flow and voltage is equivalent to the water pressure.)

Edison’s lamps, however, required a comparatively low voltage of around 110 volts. And with DC power, there is no easy way to raise or lower the voltage, making it impossible to transmit power at high voltage and low current. Thus, the only way to reduce power losses over long distances was by using larger wires with less resistance, which made the transmission system prohibitively expensive.
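The relationship between voltage, current, and wire losses can be sketched in a few lines. The load size, distance, and conductor size below are hypothetical values chosen only for illustration, and the copper resistivity is an approximate room-temperature figure; none of them come from Edison’s actual designs.

```python
COPPER_RESISTIVITY = 1.68e-8  # ohm-metres, approximate room-temperature value


def wire_resistance(length_m: float, cross_section_m2: float) -> float:
    """Resistance grows with length and shrinks with conductor area."""
    return COPPER_RESISTIVITY * length_m / cross_section_m2


def line_loss(power_w: float, load_voltage_v: float, resistance_ohm: float) -> float:
    """Heat lost in the wire while delivering power_w at load_voltage_v (I^2 * R)."""
    current_a = power_w / load_voltage_v
    return current_a ** 2 * resistance_ohm


# Hypothetical case: a 10 kW load one mile away (about 3,200 m of wire out and
# back) on a conductor with 1 square centimetre of cross-section.
resistance = wire_resistance(3200, 1e-4)
for volts in (110, 1_100, 11_000):
    loss = line_loss(10_000, volts, resistance)
    print(f"{volts:>6} V: {loss:10.2f} W lost in the wire")
```

Each tenfold increase in voltage cuts the current tenfold and the loss a hundredfold. At a fixed 110 volts, the only remaining lever is the conductor’s cross-section, which is where the copper cost comes from.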

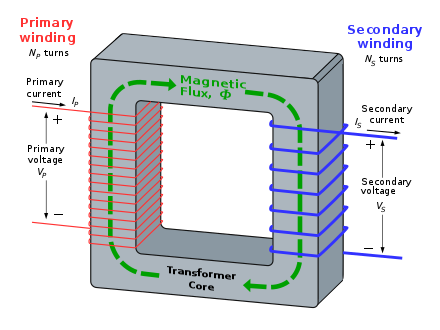

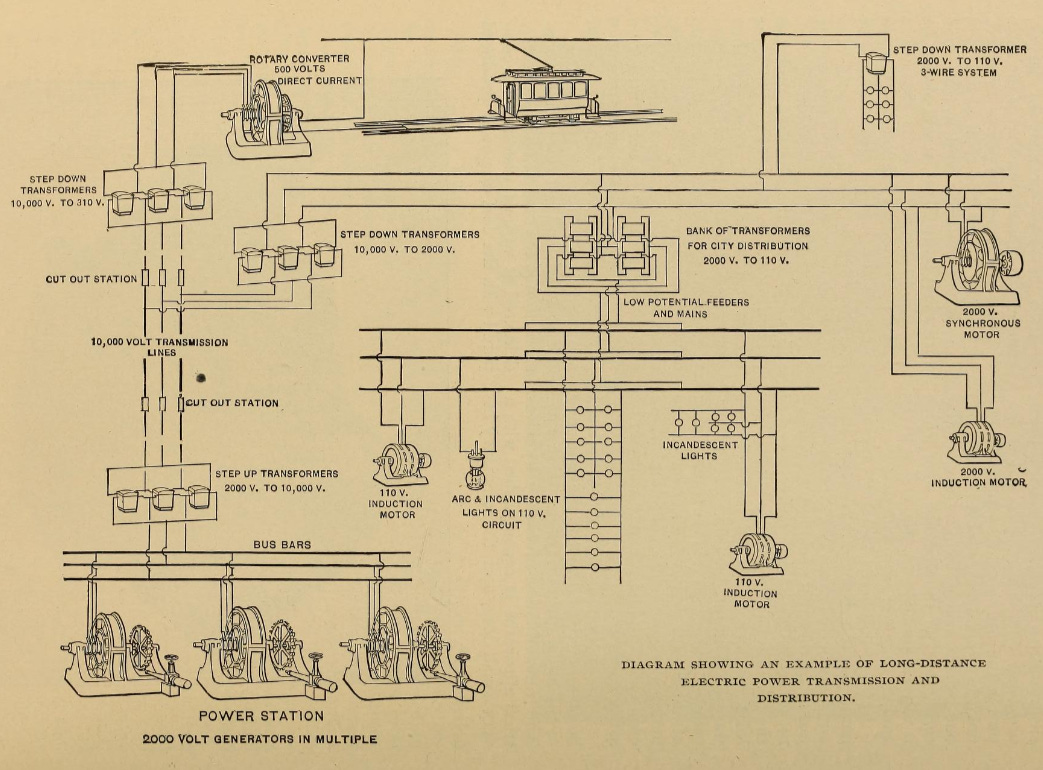

But an electrical system based on alternating current (AC) doesn’t share this drawback. Unlike DC power, with AC power the flow of current oscillates back and forth - in our water analogy, this would be like water moving back and forth in a pipe. This oscillation makes it possible to step up or step down voltage, using a device called a transformer. With an AC system, it was possible to economically transmit power much longer distances by generating power at a low voltage, stepping it up to a high voltage for transmission, then stepping it back down again prior to use.
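The step-up and step-down chain can be illustrated with an idealized, lossless transformer, where the voltage scales with the turns ratio and the current scales inversely. The generator output and turns ratios below are hypothetical, and line losses are ignored so the sketch shows only the voltage and current bookkeeping.

```python
def ideal_transformer(voltage_v: float, current_a: float, turns_ratio: float):
    """Lossless transformer model.

    turns_ratio is secondary turns divided by primary turns. Voltage is
    multiplied by the ratio and current divided by it, so power is conserved.
    """
    return voltage_v * turns_ratio, current_a / turns_ratio


# Hypothetical generator output: 2,000 V at 500 A (1 MW)
gen_v, gen_i = 2_000.0, 500.0

# Step up 20x for transmission, then back down to lamp voltage for use
line_v, line_i = ideal_transformer(gen_v, gen_i, 20)
home_v, home_i = ideal_transformer(line_v, line_i, 110 / line_v)

print(f"Generator: {gen_v:,.0f} V, {gen_i:,.0f} A")
print(f"Line:      {line_v:,.0f} V, {line_i:,.0f} A")
print(f"Customer:  {home_v:,.0f} V, {home_i:,.0f} A")
print(f"Wire loss falls by a factor of {(gen_i / line_i) ** 2:,.0f}")
```

Because wire losses depend on the square of the current, a 20x step-up in this sketch reduces losses in a given line by a factor of 400, while the customer still receives power at a low, usable voltage.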

But Edison was committed to DC power. For one, most of his patents were for DC systems (though he obtained rights for a Hungarian AC system called the ZBD system in 1886). For another, Edison believed that high-voltage AC transmission was fundamentally unsafe compared to low-voltage DC wires that could be touched without fear of injury. His fears weren’t unfounded. Dangling power lines from electric arc systems (which operated at several thousand volts) often caused injuries and deaths, and it wasn’t uncommon in the late 1880s to read stories in New York newspapers about cases of “death by wire”.

There were also other disadvantages to AC power. AC generators were less efficient than DC generators, and initially there was no way to meter AC electricity use. And most critically, in the 1880s no one had built a practical AC motor. Nevertheless, most places in the US lacked the dense population centers that worked best with DC central plants and the prospect of efficiently transmitting power long distances was tantalizing to Edison’s competitors.

In 1885 Westinghouse acquired the rights to the Gaulard-Gibbs transformer, redesigned it to be cheaper to manufacture, and in 1886 installed its first AC system in Massachusetts. That same year Thomson-Houston constructed an experimental AC transmission system and began selling AC generators. And in 1888, Nikola Tesla unveiled an AC induction motor (which was quickly acquired by Westinghouse), removing one of the major limitations on using AC power.

Edison responded to his competitors’ advances in AC power by filing patent infringement lawsuits, publishing pamphlets warning about the dangers of high-voltage AC power, lobbying legislatures to ban high-voltage transmission, and encouraging the use of alternating current as a method of execution in New York (replacing death by hanging). But the advantages of AC power, particularly in smaller, dispersed municipalities, were significant, and it continued to gain in popularity. In 1890 roughly 10% of central stations provided AC power; less than a decade later, that had risen to 43%.

The war of the currents ultimately ended with a whimper. In 1888 Charles Bradley invented the rotary converter, which made it possible to convert from AC to DC, allowing both types of current to be used in the same system. Four years later, Edison’s electrical manufacturing company, the Edison General Electric Company, merged with Thomson-Houston to form General Electric (GE). After the merger Edison left the company to pursue other work, and GE increasingly pursued AC systems. Over the next few decades, AC gradually replaced DC power and by 1917 95% of central stations were generating AC power (though some municipalities continued to provide DC power to customers well into the 2000s).

Hydroelectric power and long-distance transmission

To minimize transmission losses, direct current power plants (which typically used coal-fired steam engines) needed to be built as close to their customers as possible. But alternating current and the transformer made it cost effective to transmit high-voltage electric power long distances, which opened up a new frontier for hydroelectric power generation. Hydroelectric generators had been operating in the US since the late 1870s, but these plants generally provided low-voltage DC power to nearby users. With the advent of high-voltage transmission lines, however, remote sources of hydropower could be tapped, and power providers began building hydroelectric power plants connected to far away customers.

In 1889 Charles Brush’s company built the first long distance transmission line in the US. It was a 4,000 volt DC line in Oregon that connected a power station at Willamette Falls to an arc lighting system in Portland. A year later, the first long distance AC transmission line in the US, also operating at 4,000 volts, was built at the same location. 4,000 volts was high compared to the 110 volts of Edison’s DC system, but not any higher than the voltages required for existing arc lamp systems. But in 1891, the world’s first true high voltage line was built in Germany. By stepping up the power to an unprecedented 25,000 volts, the line was able to successfully transmit power 175 kilometers from a hydroelectric plant in Lauffen to Frankfurt. Power companies in the US took note, and by 1900 the US had 15 long distance transmission lines connected to hydroelectric power plants that operated at up to 40,000 volts.

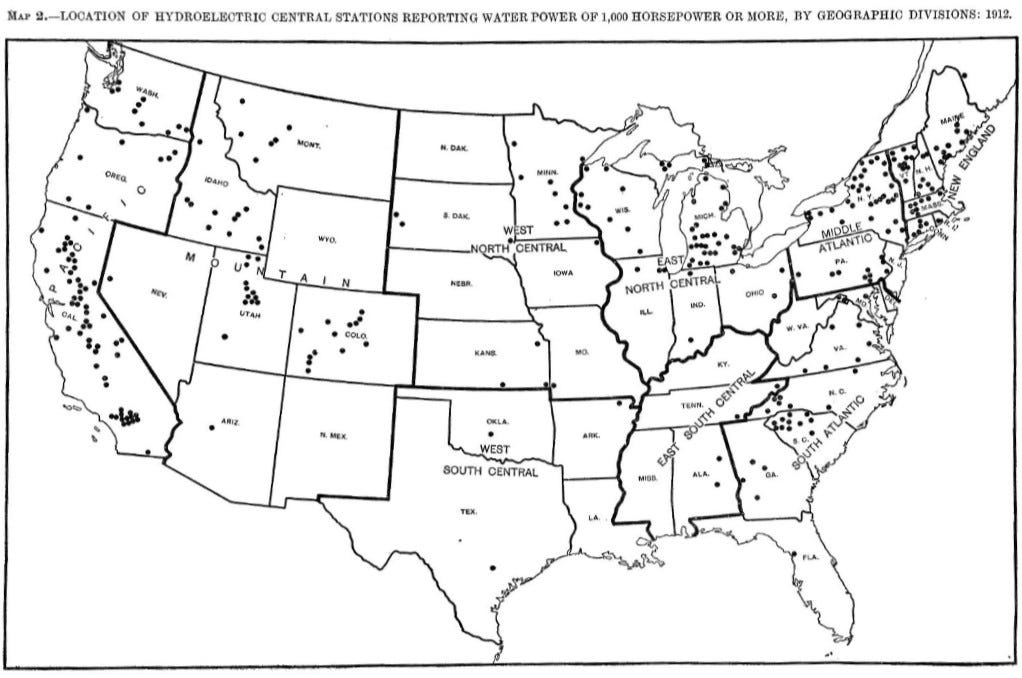

The most notable of these was the enormous Edward Dean Adams plant at Niagara Falls, which was the first large-scale power plant in the world. Completed in 1896, its generators had 11.1 megawatts of capacity, nearly 20 times the size of Edison’s Pearl Street Station (later additions would bring this up to 74 megawatts). By 1912, there were 225 hydroelectric central power stations in the US, and 49 of the world’s 55 transmission lines operating at or above 70,000 volts were connected to hydroelectric power plants.

From lighting to a universal system

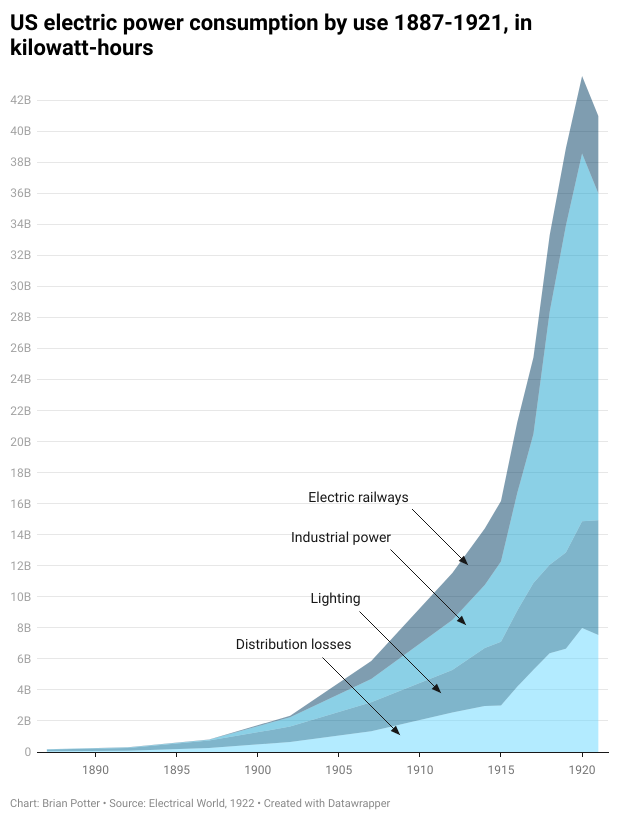

The incandescent lamp was enormously popular and for many years it was the primary driver of electric power use. By 1902 there were an estimated 18 million incandescent lamps in the US, a 500% increase in just 12 years. As late as 1923 electric lighting made up more than 75% of Chicago’s residential electricity consumption, but the incandescent lamp was closely followed by other uses for electric power. One important early application was the electric streetcar. In 1887 the US had just 35 miles of electric streetcar line, but by 1902, there were more than 800 electric streetcar systems in the US running on 22,000 miles of track. By the end of the decade, electric railways were the second largest consumer of electricity in the US.

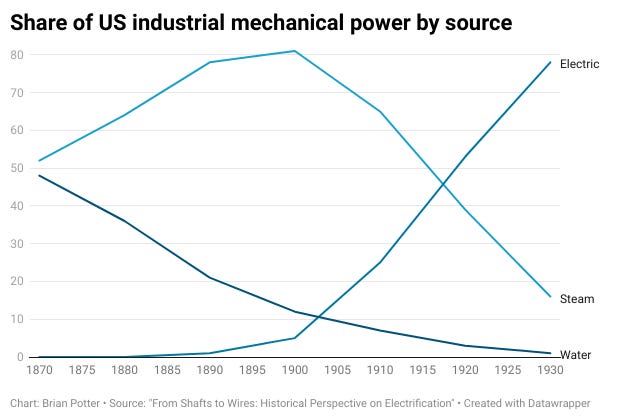

Manufacturing also began to make use of electric power, but industry lagged behind residential and municipal adoption. As late as 1900, only 5% of industrial mechanical power was provided by electricity, but it was increasingly evident that electric power had many benefits in an industrial setting. With steam engines, which dominated the industrial power supply in the early 20th century, equipment was typically powered by connecting the engines to overhead rotating drive shafts, which were connected to equipment using belts and pulleys. But with electric power, this cumbersome system could be eliminated, yielding a host of benefits:

First, electricity was more efficient. Much less power was wasted because, unlike the constant power losses from friction, it could be transported short distances over wires with negligible losses. Second, electricity was more reliable. Providing power directly to each machine eliminated the slight wobbles and fluctuations inherent in belt-driven systems and ensured that energy levels would not fluctuate based on the actions of neighboring workers. Such control could help achieve the long-held dream of manufacturing interchangeable parts. Third, electricity was more flexible. The line-drive system required all of a company’s work operations to be laid out in linear paths connected to the overhead belts. To minimize power losses, factories were often dense, multilevel structures where the most energy-intensive operations were located closest to the power source. Electricity could be delivered anywhere in whatever amounts were desired, freeing operators to organize production according to the flow of parts, not the transmission of power. - Routes of Power

By 1914, electricity supplied almost 40% of the mechanical power used by US industry, and by 1920, manufacturing was using more electricity than all other users combined.

In the early years of electrification, different uses of electric power required separate circuits and separate electrical generators. A typical power station might have “many engine-driven arc-light machines, each with its own independent circuit; direct current generators supplying nearby incandescent lamps; 500-volt generators for street railways; and alternators for remote lighting” (Chesney 1936). But the fact that AC systems could modulate their voltage and be converted into direct current created the potential for a “universal” electric system where power would be centrally generated from a single source, stepped up to a higher voltage for transmission, and then converted to the necessary current and voltage for the end user. By the end of the 1890s, universal systems based on alternating, three-phase current (the electrical power system we have today) were becoming increasingly popular.

Scale and the birth of the grid

In the early 1890s, electricity was still a rare luxury. In 1892, less than 0.5% of Chicago’s population—some 5,000 people—had electric lights. But electricity demand was growing enormously. During the last decade of the 19th century, the capacity of central generating plants in the US increased by more than a factor of 9, and by 1902 there were over 3,600 central generating plants in the US. Only a decade later, there were more than 5,000 central plants powering more than 75 million incandescent bulbs. At the time, it was estimated that the electric power industry needed $2 billion for capital expenditures over the next five years ($61 billion in 2023 dollars), making the electric power industry second only to the railroad industry in capital requirements. As one financier observed at the time, the amount of money required by the burgeoning US electrical system was “bewildering” and “sounded more like astronomical mathematics than totals of round, hard-earned dollars” (Hirsh 1999).

Outside of hydroelectric plants, most electric plants used generators driven by coal-fired reciprocating steam engines until the early 1900s. But in the face of continuously rising demand for power, reciprocating engines were increasingly inadequate. Engine components “became so huge that transportation and installation had become almost impossible. And its noise, smoke, and throbbing motions often caused nearby residents to complain” (Hirsh 1989). This soon led electric power companies to switch to smaller, more fuel efficient steam turbines, which had first been invented by Charles Parsons in 1884. Among the first to order a steam turbine for an electric power plant was Samuel Insull, who ordered a 5,000 kilowatt steam turbine for his Chicago facility in 1903. Within a year of Insull’s order, Westinghouse and General Electric had US orders for 540,000 kilowatts of steam turbines.

As electric power providers adopted steam turbines, and as demand for electric power continued to increase, the industry became driven by the inexorable logic of scale. Not only were steam turbines more efficient than reciprocating engines, but they got more efficient the larger they became. Larger power plants could thus provide electricity more cheaply, provided they had enough customers to sell it to. Moreover, because electricity couldn’t be cheaply stored, it needed to be produced at the moment of consumption. The required generating capacity for a power company was thus driven by peak demand. Larger numbers of customers, particularly different sorts of customers that used electricity at different times, would smooth out the peaks, and thus require proportionally less generating capacity per customer. Electric streetcars, for instance, largely used electric power at different times than incandescent lights. Electric power company owners like Insull deliberately courted streetcar companies and other similar customers to increase their plants’ load factor - the fraction of time that equipment was generating electricity. This allowed them to spread out the fixed costs of the power plant and lower the average cost of production.
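The effect of combining customers with different usage patterns can be shown with a toy example. The hourly load figures below are invented purely for illustration, and the sketch uses a common engineering definition of load factor (average load divided by peak load), which is an assumption here rather than something the sources specify.

```python
# Hypothetical hourly demand in kW, midnight to midnight (24 values each).
lighting = [1, 1, 1, 1, 1, 2, 3, 2, 1, 1, 1, 1,
            1, 1, 1, 1, 2, 5, 8, 9, 8, 6, 3, 2]
streetcars = [0, 0, 0, 0, 1, 3, 7, 9, 8, 5, 4, 4,
              4, 4, 4, 5, 7, 9, 7, 4, 3, 2, 1, 0]


def load_factor(hourly_kw):
    """Average load divided by peak load (assumed definition)."""
    return sum(hourly_kw) / len(hourly_kw) / max(hourly_kw)


combined = [a + b for a, b in zip(lighting, streetcars)]

# Capacity needed if each load had its own plant vs. one shared plant
separate_capacity = max(lighting) + max(streetcars)
shared_capacity = max(combined)

print(f"Capacity with separate plants: {separate_capacity} kW")
print(f"Capacity with one shared plant: {shared_capacity} kW")
for name, load in [("lighting", lighting), ("streetcars", streetcars),
                   ("combined", combined)]:
    print(f"Load factor, {name}: {load_factor(load):.2f}")
```

Because the two peaks don’t fully coincide, the shared plant needs less capacity than two separate ones while selling the same total energy, so its fixed costs are spread over more kilowatt-hours.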

Although hydroelectric plants could generate large amounts of electric power, the amount of power they produced fluctuated over time as river flow rates rose and fell. For example, the Susquehanna River, which became the site of an enormous hydroelectric plant, had a peak flow rate more than 300 times higher than its lowest flow rate. At peak times, huge numbers of customers would be required to take the load. At low times, the drop in generating capacity needed to be made up by coal-fired plants. Large hydroelectric facilities thus worked best if they were tied into a large network of many customers and many other power plants.

The need for scale pushed the electric power industry to adopt a “grow and build” strategy defined by building larger, more efficient power generating stations and connecting them to as many customers as possible. The maximum size of power plant turbines rose from 1.5 megawatts in 1900 to 208 megawatts in 1930. By the 1920s, grow and build “appeared to be the only possible and logical approach for running a utility company” (Hirsh 1999).

Increasing scale was partly achieved by consolidation. In Chicago, for instance, Insull’s Commonwealth Edison company grew by acquiring many of its competitors, and slowly expanded its reach to smaller communities around Chicago. By 1930, Insull-owned companies operated in 32 states and provided more than 10% of the US’ electric power, with transmission lines linking together “more than a thousand service areas” (Lambert 2015). In Boston, the Boston Edison company likewise grew by acquiring smaller power providers. In California, Pacific Gas and Electric (PG&E) acquired or merged with more than two dozen companies, and by 1914 consisted of “virtually a single operating unit of interconnected plants” (Hughes 1983), supplying 1.3 million people with power across 30 counties.

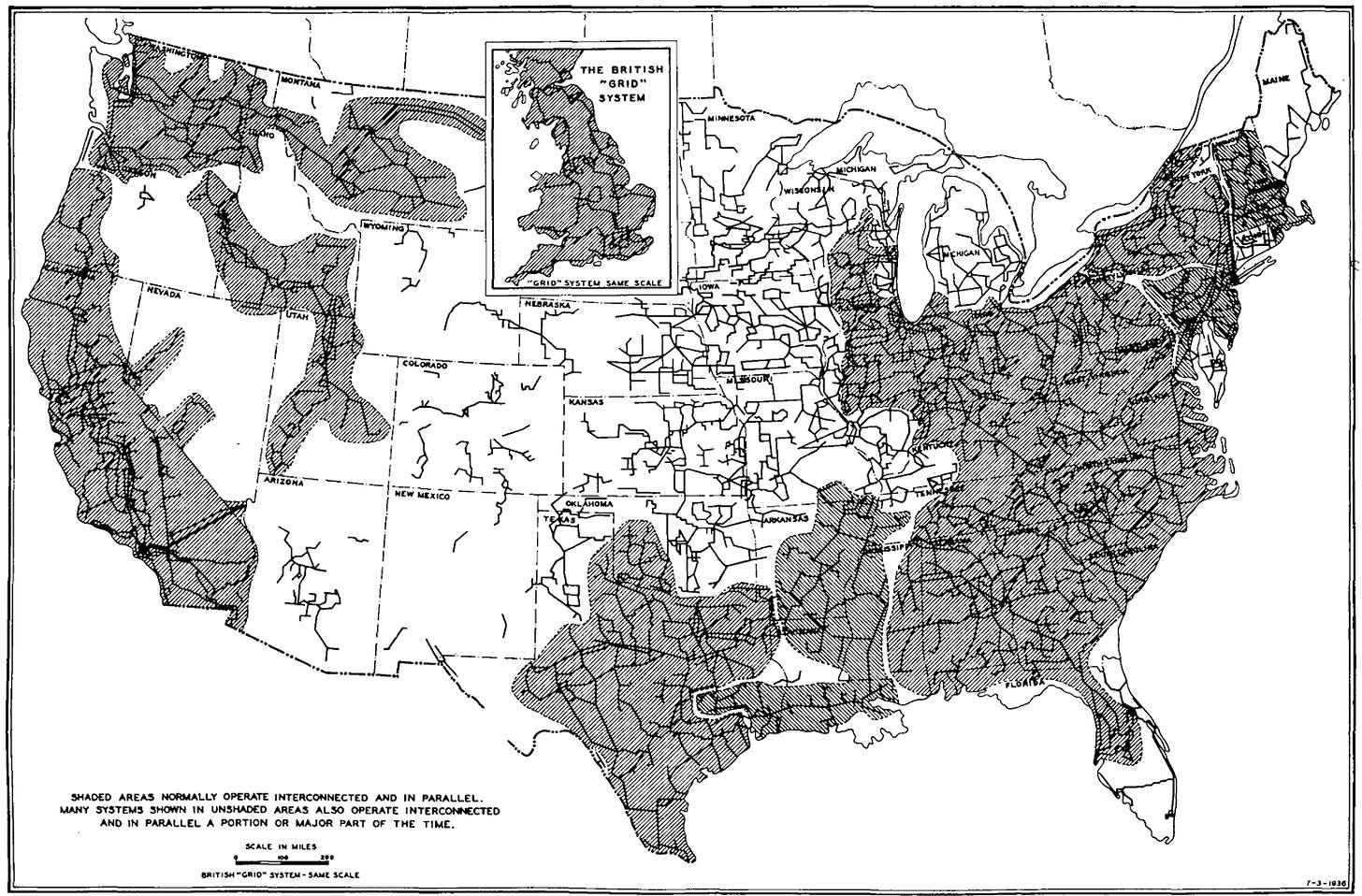

In other cases, scale was achieved through cooperation. Electric power companies would sometimes pool their resources and build a single large power station that was more efficient than multiple smaller stations. In 1915, two midwest power companies built a large coal plant at Wheeling, West Virginia, and connected it to their systems in Ohio and Pennsylvania. The Windsor coal plant, which was built at the mouth of a coal mine to minimize the costs of coal transportation, was expected to be “the most economical electric generating station ever built” (Cohn 2017). In 1921, the Philadelphia Electric Company built the huge Conowingo hydroelectric plant on the Susquehanna River. In order to make use of its maximum capacity, PEC linked its grid with two other companies to form the Pennsylvania-New Jersey (PNJ) interconnection, a single integrated power system with more than 1,500 megawatts of electric power capacity. Even companies that didn’t invest in shared generating plants began to interconnect their electrical transmission systems to share power and get the benefits of scale. By 1929, out of 200 electric utilities providing power in 11 northeastern states, 45% of them were interconnected.

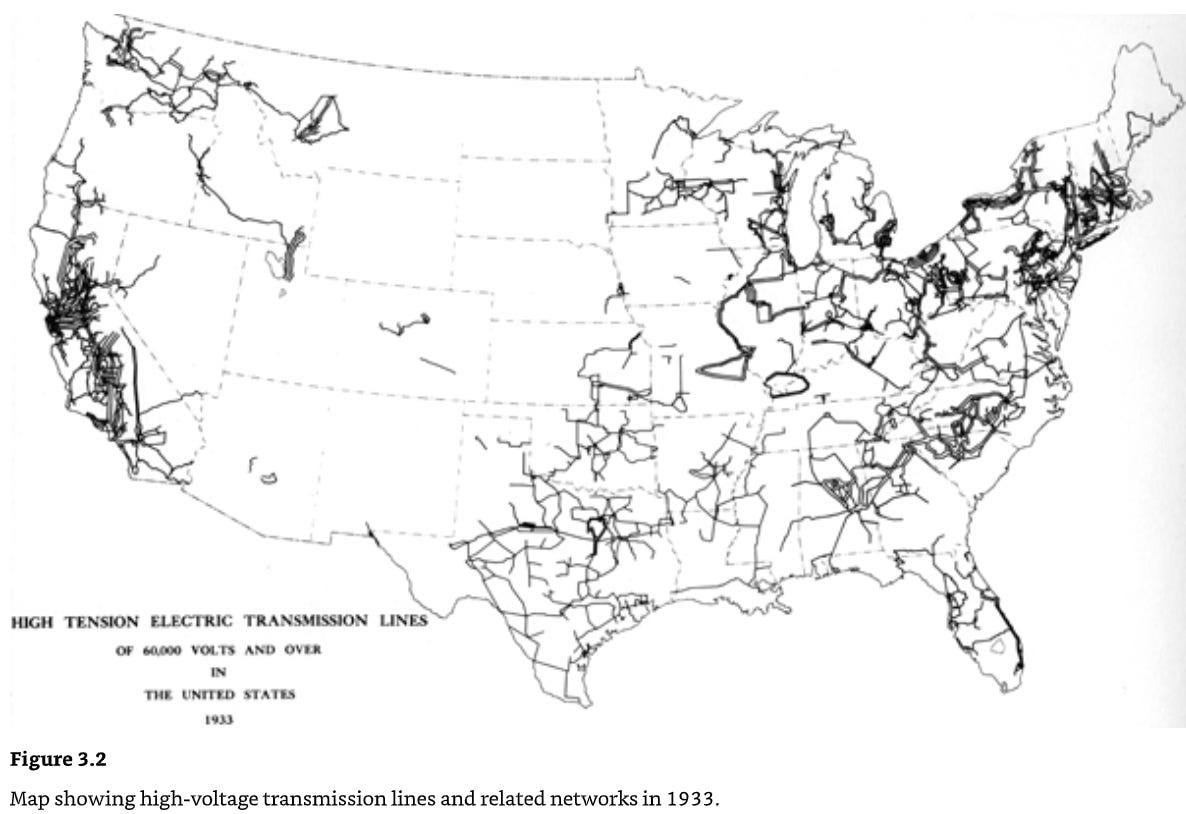

Whether by cooperation or consolidation, expanding electrical service required the construction of thousands of miles of high-voltage transmission lines. High-voltage transmission lines made it possible to generate power in large, efficient electrical plants and send that power to far away customers. They also made it possible to tie together different service areas, smoothing out peaks of demand and allowing power to be shared during emergencies. In 1914, there were about 3,200 miles of transmission lines above 70,000 volts in the US. By 1937, that had increased to more than 35,000 miles.

As power plant size increased, so did transmission voltages, which further reduced power losses and made longer distance transmission possible. In 1901, the highest transmission line voltage in the US was 60,000 volts. By 1934, that had reached 287,000 volts. These transmission lines tied together the thousands of electrical service providers in the US into huge interconnected systems that spanned thousands of square miles and connected millions of customers.

The enormous benefits of scale, and the enormous costs of competition (due to the duplication of expensive infrastructure), led electric power providers to be considered “natural monopolies.” Rather than competing against each other, electric power providers accepted “state regulation of rates of return, in return for monopoly control of regional markets” (Cohn 2017). By 1915, 41 states had state-level utility commissions that regulated electric power companies, and by the 1930s electric utilities largely operated in distinct geographic areas where there was “little duplication of service.” In fact, by 1936 fewer than 1% of communities with electric service were served by more than one utility. This monopoly status was accepted in part because of the steadily falling costs of electricity. Between 1890 and 1920, the cost per kilowatt-hour of electric power fell by over 80% in real terms.

By 1929 the US generated more electric power than the rest of the world combined, and electric power had become firmly embedded in the American way of life. Close to 70% of homes had access to electricity, which they used to power lights and an increasing range of electrical appliances. By 1929, nearly 40% of households had electric washing machines, and by 1935 “the adoption of electric irons was nearly universal, while about half of American households had vacuum cleaners, washing machines, toasters, and clocks. Roughly a third of Americans had refrigerators, and many owned waffle irons, ranges, hot plates, and heaters” (Jones 2014). In industry, the amount of electric power per worker increased by a factor of 30 between 1899 and 1925, and by 1930 electric power supplied nearly 80% of industrial mechanical power. Electric power literally transformed the factory floor, enabling Ford’s assembly line and new types of industrial processes such as the Hall-Heroult process for cheaply making aluminum.

But this wasn’t the end of America’s transmission story. It had only set the stage for the electric power industry to grow even larger.

This will continue next week with part II

Sources

Books

The Power Makers - Maury Klein 2008

Empires of Light - Jill Jonnes 2003

The Grid - Julie Cohn 2017

Networks of Power - Thomas Parke Hughes 1983

Technology and Transformation in the American Electric Utility Industry - Richard Hirsh 1989

Power Loss - Richard Hirsh 1999

Turning Points in American electrical history - James Brittain 1977

Edison’s Electric Light - Robert Friedel and Paul Israel 2010

Central station electric service - Samuel Insull 1922

Electric Power System Basics for the Nonelectrical Professional

The Power Brokers - Jeremiah Lambert 2015

Routes of Power - Christopher Jones 2014

Electrifying America - David Nye 1990

Papers and other sources

Central electric light and power stations and street and electrical railways with summary of the electrical industries - US Census 1915

From Shafts to Wires - Devine 1983

Edison’s Three-wire system of distribution - Paul 1884

Hydroelectric power: The first 30 years - Allerhand 2020

Cassier’s magazine issue on Niagara power - 1895

Results accomplished in distribution of electric power by alternating currents - Emmet 1896

Interconnected Electric Power Systems - Sporn 1938

Advantages and possibilities of electric power line interconnections

A Contrarian History of Early Electric Power Distribution - Allerhand 2017

Early History of the A-C System in America - Chesney and Scott 1936

The making of an industry: electricity in the United States - Granovetter and McGuire

From novelty to utility - Usselman 1992

The Birth of an Industry - Lieb 1922

The Economics of Gateway Technologies and Network Evolution: Lessons from Electricity Supply History - David and Bunn 1988

Materials for Incandescent Lighting - Anderson 1990
