Nuclear power currently makes up slightly less than 20% of the total electricity produced in the US, largely from plants built in the 70s and 80s. People are often enthusiastic about greater use of nuclear power as a potential strategy for decarbonizing electricity production, as well as for its theoretical potential to produce electricity extremely cheaply. (Nuclear power also has some other attractive qualities, such as less risk of disruption due to fuel-supply issues, which can affect natural gas plants during cold spells, for instance.) But increased use of nuclear power has been hampered by the fact that nuclear power plant construction costs have steadily and dramatically increased over time, frequently over the life of a single project. For instance, in the 1980s several nuclear power plants in Washington were canceled after their estimated construction costs increased from $4.1 billion to over $24 billion (resulting in a $2 billion bond default by the utility provider). More recently, two reactors in Georgia (the only nuclear reactors currently under construction in the US) are projected to cost twice their initial estimates, and two reactors in South Carolina were canceled after costs rose from $9.8 billion to $25 billion. What do we know about why nuclear construction costs are so high, and why they so frequently increase? Let’s take a look.

Power plant and electricity economics

Before we look at construction costs, it’s useful to have some context about the economics of electricity production.

We can roughly break the costs of operating a power plant into three categories - fuel costs, operation and maintenance costs, and capital costs (the amortized cost of building the plant). Different types of power plants have different cost fractions. For natural gas plants, for instance, up to 70% of the cost of their electricity can come from fuel (depending on the price of gas). With nuclear power, on the other hand, a large fraction (60-80%) of the cost of the electricity comes from capital costs - the cost of building the plant itself. Nuclear plant construction costs thus have a large impact on the cost of the electricity they produce.

Because nuclear plants are expensive, and they take a long time to build, financing their construction can also be a significant fraction of their cost, typically around 15-20% of the cost of the plant. For plants that have severe construction delays and/or have high financing costs (such as the Vogtle 3 and 4 plants in Georgia), this can be 50% of the cost or more.
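To see why construction time matters so much for financing, here is a minimal sketch of interest during construction. It rests on simplifying assumptions that are not taken from the sources above: the overnight cost is spent in equal annual tranches at the start of each construction year, and interest compounds annually until the plant is finished. The 7% and 10% interest rates are hypothetical; the 5-year and 12-year build times echo the late-60s and 1980 figures cited later in this post.

```python
def financed_cost(overnight_cost: float, build_years: int, rate: float) -> float:
    """Overnight cost plus interest accrued during construction.

    Simplifying assumption: spending is split evenly across build_years,
    each tranche is spent at the start of its year, and interest compounds
    annually until construction finishes.
    """
    tranche = overnight_cost / build_years
    total = 0.0
    for year in range(build_years):
        # A tranche spent in year `year` accrues interest for the remaining years.
        years_accruing = build_years - year
        total += tranche * (1 + rate) ** years_accruing
    return total


def financing_share(overnight_cost: float, build_years: int, rate: float) -> float:
    """Fraction of the all-in cost that is interest rather than construction."""
    total = financed_cost(overnight_cost, build_years, rate)
    return (total - overnight_cost) / total


# Hypothetical scenarios: two build times, two interest rates.
for years, rate in [(5, 0.07), (12, 0.07), (12, 0.10)]:
    share = financing_share(1.0, years, rate)
    print(f"{years} years at {rate:.0%}: financing is {share:.0%} of total cost")
# Prints 19%, 37%, and 49% respectively.
```

Under these assumptions, stretching the build from 5 to 12 years roughly doubles the financing share at the same interest rate, and a higher rate pushes it toward half the total. Real projects spend along an S-curve rather than evenly, so the numbers are illustrative only.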

(Comparison between different types of power plants is often done using “overnight costs”, or the cost to build them if they were built overnight and didn’t have to pay interest charges. Because nuclear plants reliably take longer (sometimes much longer) to build than other types of power plants, this biases comparisons in favor of nuclear plants.)

The cost fractions (as well as technological capabilities) of different types of power plants affect how they get used. Because electricity can’t be cheaply stored, at any given moment the amount of electricity produced has to balance the amount consumed. Since electricity consumption varies over time, power plants are brought online and offline as demand changes (this is called “dispatch”). The order in which plants are dispatched is generally a function of their variable costs of production (with lower-cost plants coming on first), as well as how easily they can ramp their production up or down.
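The dispatch ordering described above can be sketched as a simple “merit order”: sort plants by variable cost and bring them online, cheapest first, until demand is met. This is a minimal sketch that ignores ramp rates, minimum output levels, and transmission constraints; the plant names, capacities, and costs in the example fleet are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Plant:
    name: str
    capacity_mw: float
    variable_cost: float  # $/MWh: fuel plus variable O&M (capital costs excluded)


def dispatch(plants: list[Plant], demand_mw: float) -> tuple[dict[str, float], float]:
    """Merit-order dispatch: bring plants online cheapest-first until demand is met.

    Returns the MW assigned to each dispatched plant and the variable cost of the
    last (marginal) plant needed. Ignores ramping limits and minimum output levels.
    """
    schedule: dict[str, float] = {}
    remaining = demand_mw
    marginal_cost = 0.0
    for plant in sorted(plants, key=lambda p: p.variable_cost):
        if remaining <= 0:
            break
        output = min(plant.capacity_mw, remaining)
        schedule[plant.name] = output
        marginal_cost = plant.variable_cost
        remaining -= output
    if remaining > 0:
        raise ValueError(f"demand exceeds available capacity by {remaining} MW")
    return schedule, marginal_cost


# Hypothetical fleet; numbers are illustrative only.
fleet = [
    Plant("gas_peaker", 300, 80.0),
    Plant("nuclear", 1000, 10.0),
    Plant("gas_combined_cycle", 800, 30.0),
    Plant("wind", 400, 0.0),
]

for demand in (1500, 2300):
    schedule, marginal = dispatch(fleet, demand)
    print(demand, schedule, f"marginal cost ${marginal}/MWh")
```

Because its variable cost is low, the hypothetical nuclear plant lands near the front of the queue and runs at full output in both scenarios, while the peaker only runs when demand is high.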

Because the cost of nuclear electricity is largely due to capital costs (which you pay whether the plant produces electricity or not), and because US plants have often not been designed to ramp up and down easily, nuclear plants tend to be operated continuously. Nuclear is sometimes praised for having low fuel costs, but all else being equal (i.e., assuming total production cost stays constant), it’s better to have a larger fraction of your electricity costs be variable, so that if demand drops, production cost drops as well.
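A small worked example shows why a larger variable share helps when demand falls. The two plants below are hypothetical: both produce electricity for $60/MWh at full output, but one has 70% of its costs fixed (in line with the 60-80% capital share for nuclear mentioned above) and the other has 70% of its costs variable (in line with the fuel share for gas plants).

```python
def cost_per_mwh(fixed_cost: float, variable_cost: float, output_mwh: float) -> float:
    """Average cost per MWh: fixed costs are spread over whatever is produced."""
    return (fixed_cost + variable_cost * output_mwh) / output_mwh


FULL_OUTPUT_MWH = 1_000_000  # hypothetical annual output at full operation

# Both plants cost $60M/year at full output ($60/MWh); only the split differs.
plants = {
    "capital-heavy (70% fixed)": (42_000_000, 18.0),  # ($/year fixed, $/MWh variable)
    "fuel-heavy (30% fixed)": (18_000_000, 42.0),
}

for name, (fixed, variable) in plants.items():
    full = cost_per_mwh(fixed, variable, FULL_OUTPUT_MWH)
    half = cost_per_mwh(fixed, variable, FULL_OUTPUT_MWH / 2)
    print(f"{name}: ${full:.0f}/MWh at full output, ${half:.0f}/MWh at half output")
# capital-heavy: $60 -> $102/MWh; fuel-heavy: $60 -> $78/MWh
```

When output halves, the capital-heavy plant still has to recover all of its fixed costs from fewer MWh, so its average cost rises much more steeply.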

(This is another complicating factor in comparing different types of power plants. Comparison is often done using a plant's “levelized cost of electricity” (LCOE): the net present cost of building and operating the plant divided by the discounted amount of electricity it will produce over its lifetime. But the value of the electricity a plant produces differs at different times. For instance, half the time a nuclear plant will be operating at night, when the price of electricity might be lower (depending on the utility provider). By contrast, natural gas peaker plants will be used when demand is unexpectedly high and the price of electricity is much higher. Intermittent sources such as wind and solar will sometimes produce more electricity than is expected or needed, which can push down the price, in some cases enough that the price actually goes negative. Nuclear, wind, and (sometimes) solar thus might often be selling less valuable electricity than other types of power plants.)
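For concreteness, the LCOE calculation can be sketched as discounted lifetime costs divided by discounted lifetime generation. This is a minimal sketch assuming annual cash flows and a single constant discount rate, and the example plant is hypothetical. Note that, as described above, the result says nothing about when during the day or year the electricity is produced, which is exactly the limitation of comparing plants by LCOE alone.

```python
def lcoe(costs: list[float], energy_mwh: list[float], discount_rate: float) -> float:
    """Levelized cost of electricity in $/MWh.

    costs[t]: all spending in year t (construction, O&M, fuel), in dollars
    energy_mwh[t]: electricity generated in year t, in MWh
    LCOE = sum(costs[t] / (1 + r)**t) / sum(energy_mwh[t] / (1 + r)**t)
    """
    if len(costs) != len(energy_mwh):
        raise ValueError("costs and energy_mwh must cover the same years")
    factors = [(1 + discount_rate) ** -t for t in range(len(costs))]
    pv_costs = sum(c * f for c, f in zip(costs, factors))
    pv_energy = sum(e * f for e, f in zip(energy_mwh, factors))
    return pv_costs / pv_energy


# Hypothetical plant: 5 construction years at $1B/year, then 40 operating years
# producing 8 million MWh/year with $200M/year of operating and fuel costs.
build, life = 5, 40
costs = [1e9] * build + [2e8] * life
energy = [0.0] * build + [8e6] * life
print(f"LCOE at 7% discount rate: ${lcoe(costs, energy, 0.07):.0f}/MWh")
```

Because construction spending comes years before any electricity is sold, a higher discount rate (or a longer build) raises the LCOE of a capital-heavy plant sharply.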

Nuclear power basics

It’s also useful to have a bit of context about how a nuclear plant works. A nuclear power plant is a type of thermal power plant, where a heat source is used to turn water into steam, which then drives a turbine. For nuclear power, the heat source is radioactive nuclear fuel producing a nuclear chain reaction.

Nearly all nuclear reactors in the US (and around the world) are light water reactors (LWRs), which use light water (normal H2O) to carry heat away from the reactor core. In the most common design, the pressurized water reactor (PWR), the reactor heats water in a primary loop, which then transfers its heat to a second, separate loop of water that boils and drives the turbine (this keeps the irradiated water separated from the rest of the plant). In a light water reactor, water is also used as the neutron moderator, the material that slows down emitted neutrons so they are more likely to trigger further fission events.

(Diagram of a pressurized water reactor, via the Department of Energy. The other major type of LWR is a boiling water reactor (BWR), where steam is created in the pressure vessel directly.)

This isn’t the only way of building a reactor, and many experts think that other reactor technologies would have been better suited for power station construction. Light water reactors came to dominate because they were the technology being used by the US Navy, which in turn chose them because they had useful properties for ship-based reactors (for instance, another technology under consideration used liquid sodium as the reactor coolant; this was ultimately deemed unsuitable for naval reactors, since sodium reacts violently with water). For historical reasons, it was deemed important to develop the US’s civilian nuclear power sector quickly, and so existing light water reactor technology was used.

A major risk for this type of reactor is a “loss-of-coolant accident” (LOCA). If a pipe bursts, or the supply of cooling water is otherwise disrupted and isn’t available to cool the nuclear fuel, the fuel can heat up to the point where it melts (a “core meltdown”), potentially damaging the reactor and exposing radioactive material. (This type of failure led to the phrase “China Syndrome”, the idea that in a nuclear meltdown the molten fuel would burn its way through the reactor housing, then the containment, then into the earth and (figuratively) make its way to China.) Both the Fukushima and Chernobyl power plants experienced core meltdowns, and Three Mile Island experienced a partial core meltdown (which largely remained contained inside the reactor).

Even after the reactor is turned off, the radioactive materials in the reactor continue to generate heat for an extended period of time (this is known as “decay heat”). Thus even in a disaster that causes the reactor to be shut down, cooling systems are still needed to keep the nuclear fuel cool. This means that cooling systems must be able to handle a wide variety of potential failure modes and environmental conditions - regardless of what happens to the reactor or the plant, the cooling systems must keep working. (As we’ll see, one way of thinking about the steady increase in nuclear plant regulations is that they’re the result of constantly learning new things that can happen to a reactor.)

Nuclear power costs

The story of nuclear power plants in the US is one of rapidly rising construction costs. Commercial plants that started construction in the late 1960s had a cost of $1000/kWe or lower (2010 dollars), which rose to $9000/kWe for plants started just 10 years later (both in overnight costs). Current costs are roughly in line with this: the Vogtle 3 and 4 reactors, despite costing nearly double what was estimated, are likely to come in at around $8000/kWe in overnight costs (with an actual cost of nearly double that due to financing costs), or $6000/kWe in overnight costs in 2010 dollars.

The US seems to do especially badly here, but most other countries have also seen steadily rising costs. Here are French costs (which some experts suggest are underestimates):

And here are German and Japanese costs:

Most countries seem to show a similar pattern of costs increasing into the 80s, after which they level off (though the new Flamanville 3 reactor in France seems likely to come in at approximately $12,000/kWe, or the equivalent of ~$4000/kWe in 2010 overnight costs, double what the French were able to achieve previously).

The only country where the costs of nuclear plant construction seem to have steadily decreased is South Korea:

The fact that South Korea is the only country to exhibit this trend has led some experts to speculate that the cost data (which comes directly from the utility and hasn’t been independently audited) has been manipulated and we shouldn’t draw conclusions from it.

Most countries do show early cost decreases if you include the costs to build early small-scale demonstration and test reactors, though it’s unclear how relevant this comparison is to full-scale commercial operating plants (and these costs are often unknown and must be estimated.) In practice, these reactors often get excluded from datasets tracking cost changes.

Because plants take so long to build, these cost increases tended to be seen on in-progress plants - a 1982 analysis found that the final construction cost for US plants ended up being anywhere from 2 to 4 times as high as the initial estimated cost:

What does that money get spent on when building a plant?

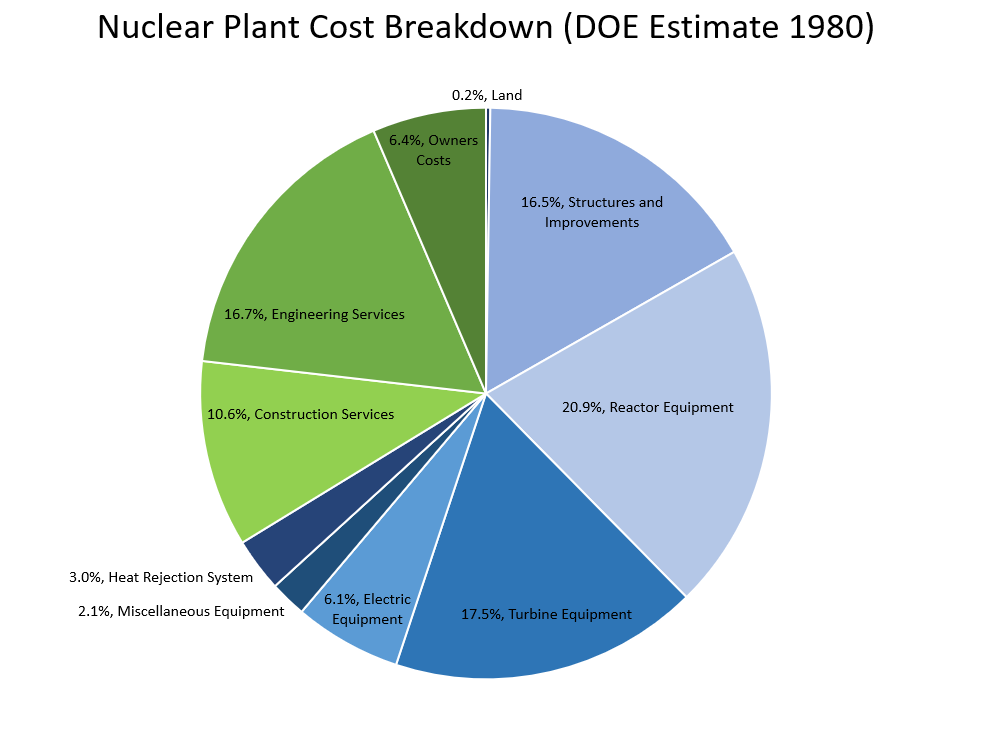

There are a variety of breakdowns of plant costs available (some of which are summarized here). We’ll look at a breakdown done by the DOE in 1980 for a hypothetical 1100 MW plant, which (theoretically) should reflect the costs of US plants during the era when most of them were being built. (Note that this excludes financing costs.)

Roughly a third of the costs are “indirect costs” - engineering services, construction management, administrative overhead, etc. For the direct costs, the reactor, the turbine equipment, and the plant structures each make up a similar fraction, with the balance made up by additional plant systems. Also note that the engineering design for the plant costs nearly as much as the reactor itself.

One thing this makes clear is that nuclear plants are very labor-intensive to build (with probably at least 50% of the cost coming from indirect costs and on-site labor), which suggests that construction cost comparisons between countries probably need to be wage-adjusted to be meaningful (for some reason, most comparisons don’t seem to do this).
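One simple way to do that wage adjustment is sketched below. It is a hypothetical approach, not one taken from the studies cited here: it assumes a fixed share of a plant's cost is labor that scales linearly with local wages, and that the remainder (equipment and materials) would cost the same in either country. The 50% labor share echoes the rough estimate above; the plant cost and wage levels are made up.

```python
def wage_adjusted_cost(cost: float, labor_share: float,
                       local_wage: float, reference_wage: float) -> float:
    """Re-express a plant's cost as if its labor had been paid reference wages.

    Assumes labor_share of the cost scales linearly with wages and the rest
    (equipment, materials) would cost the same in either country.
    """
    if not 0.0 <= labor_share <= 1.0:
        raise ValueError("labor_share must be between 0 and 1")
    labor = cost * labor_share
    non_labor = cost - labor
    return non_labor + labor * (reference_wage / local_wage)


# Hypothetical: a $4000/kWe plant built where wages are half the reference level.
adjusted = wage_adjusted_cost(4000.0, labor_share=0.5, local_wage=20.0, reference_wage=40.0)
print(f"${adjusted:.0f}/kWe at reference wages")  # $6000/kWe
```

Under these assumptions, a low-wage country's apparent cost advantage shrinks considerably once its labor is re-priced; a fuller adjustment would also need to account for differences in labor productivity.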

Rising labor costs

So costs, especially in the US, increased dramatically over a relatively short period of time. Nuclear plant construction is often characterized as exhibiting “negative learning” - instead of getting better at building the plants over time, we’re getting worse (in terms of cost to build them, at least.) What do we know about why costs increased?

During the 70s and 80s, most of the cost increase can be attributed to increased labor costs: an estimate by United Engineers and Constructors found that between 1976 and 1988, labor costs to build a plant escalated at 18.7% annually, while material costs escalated at 7.7% annually (against an overall inflation rate of 5.5%). Of those labor costs, over half were due to expensive professionals - engineers, supervisors, quality control inspectors, etc.
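Compounding makes the gap between those rates larger than it looks. A minimal sketch, assuming each rate applies for 12 full years (1976 to 1988) and using general inflation to convert nominal growth to real growth:

```python
def cumulative_escalation(annual_rate: float, years: int) -> float:
    """Total growth factor from compounding annual_rate for the given years."""
    return (1 + annual_rate) ** years


YEARS = 1988 - 1976  # assumes 12 full years of compounding
RATES = {"labor": 0.187, "materials": 0.077, "general inflation": 0.055}

inflation = cumulative_escalation(RATES["general inflation"], YEARS)
for name, rate in RATES.items():
    nominal = cumulative_escalation(rate, YEARS)
    real = nominal / inflation  # growth after removing general inflation
    print(f"{name}: {nominal:.1f}x nominal, {real:.1f}x real")
# labor: 7.8x nominal, 4.1x real
# materials: 2.4x nominal, 1.3x real
# general inflation: 1.9x nominal, 1.0x real
```

In other words, under these assumptions the labor cost of building a plant roughly quadrupled in real terms over the period, while material costs rose by roughly 30%.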

Other estimates seem roughly in line with this. A 1980 estimate produced by Oak Ridge suggests that material volume increases between the early 1970s and 1980 generally ranged from 25-50% (not nearly enough to account for the cost increases seen):

And a recent paper by Eash-Gates et al. examined (among other things) cost increases for a sample of nuclear power plants built between 1976 and 1988. They found that 72% of the cost increase was due to indirect costs (and of the direct costs, some fraction of the increase would be from labor), which also indicates a large increase in spending on expensive professionals such as engineers and managers:

One large cause (and effect) of cost increases seems to be the increased time it takes to build the plants - estimated time to build plants increased from just over 5 years in the late 60s to 12 years in 1980. This increases financing and labor costs, as well as increasing the probability of something negatively affecting the outcome (new regulations which must be incorporated, new objections from citizens, a changing energy landscape which makes folks question whether the plant is needed, etc.). Some of this increase was the result of the Calvert Cliffs court case, which mandated that an environmental impact review be performed for every plant built.

Regulation increase and project disruption

Why did labor costs increase? The typical story here is one of increasing regulation making the plants increasingly burdensome to build. During the late 60s and early 70s, nuclear requirements steadily increased:

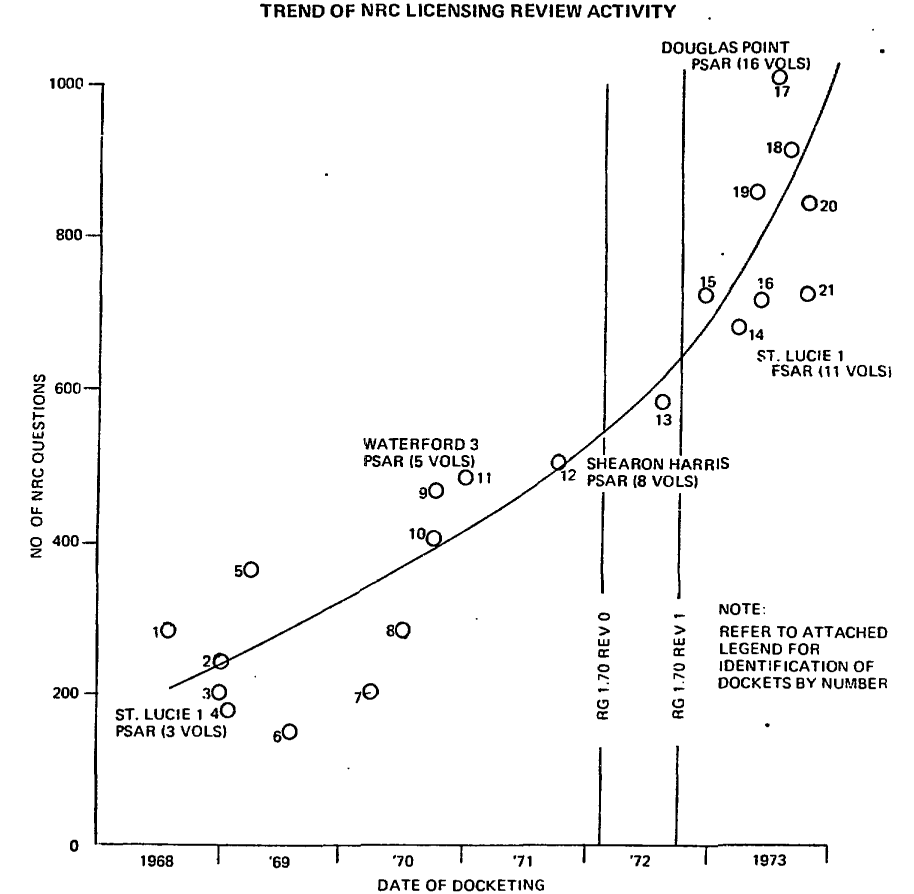

As did the thoroughness of review by the Nuclear Regulatory Commission (the Federal organization responsible for issuing plant operating licenses):

A 1980 study found that increased regulation between the late 1960s and mid 1970s was responsible for a 176% increase in plant cost:

And the previous Eash-Gates study found that at least 30% of the cost increase between 1976 and 1988 can be attributed to increased regulation (and probably much more.)

An overview of the impact of increased regulation is given by Charles Komanoff in his 1981 “Power Plant Cost Escalation”:

One key indicator of regulatory standards, the number of Atomic Energy Commission (AEC) and Nuclear Regulatory Commission (NRC) "regulatory guides" stipulating acceptable design and construction practices for reactor systems and equipment, grew almost seven-fold, from 21 at the end of 1971 to 143 at the end of 1978. Professional engineering societies developed new nuclear standards at an even faster rate (often in anticipation of AEC/NRC [requirements]). These led to more stringent (and costly) manufacturing, testing, and performance criteria for structural materials such as concrete and steel, and for basic components such as valves, pumps, and cables.

Requirements such as these had a profound effect on nuclear plants during the 1970s. Major structures were strengthened and pipe restraints added to absorb seismic shocks and other postulated "loads" identified in accident analyses. Barriers were installed and distances increased to prevent fires, flooding, and other "common-mode" accidents from incapacitating both primary and back-up groups of vital equipment. Similar measures were taken to shield equipment from high-speed missile fragments that might be loosed from rotating machinery or from the pressure and fluid effects of possible pipe ruptures. Instrumentation, control, and power systems were expanded to monitor more plant factors under a broadened range of operating situations and to improve the reliability of safety systems. Components deemed important to safety were "qualified" to perform under more demanding conditions, requiring more rigorous fabrication, testing, and documentation of their manufacturing history.

Over the course of the 1970s, these changes approximately doubled the amounts of materials, equipment, and labor and tripled the design engineering effort required per unit of nuclear capacity, according to the Atomic Industrial Forum.

These increases often had an especially large impact because they required changes to in-progress nuclear plants:

...because many changes were mandated during construction as new information relevant to safety emerged - much construction lacked a fixed scope and had to be let under cost-plus contracts that undercut efforts to economize. Completed work was sometimes modified or removed, often with a "ripple effect" on related systems. Construction sequences were frequently altered and schedules for equipment delivery were upset, contributing to poor labor productivity and hampering management efforts to improve construction efficiency. In general, reactors in the 1970s were built increasingly in an "environment of constant change" that precluded control or even estimation of costs and which magnified the direct cost impacts of new regulations and design changes.

Changes to regulations required design changes to in-progress plants, in some cases requiring existing work to be removed (and likely requiring intervention and oversight from design engineers, managers, field inspectors, and other expensive personnel.) The Eash-Gates study is consistent with this, finding that costs steadily increased even for “standard” reactor designs. A 1978 presentation from a member of the Atomic Industrial Forum argued that “achieving stable licensing requirements is the clear target for any effort to obtain shorter and more predictable project durations.”

This environment of constant change helps explain the huge increase in labor costs - changing an in-progress plant will add costs and slow down construction, even if the design changes don’t result in substantially more material or equipment use.

A universal tenet of large construction projects (and small construction projects) is that you should avoid making design changes during construction. Changes while a project is in-progress may require existing work to be removed, or new work to be done in difficult conditions. It often requires significant coordination effort just to figure out what work has been done (“Have you poured these foundations yet? Are the columns in yet?”), and what can and can’t be done in that situation. If a pipe needs to run through a beam, it’s easy to design the beam to accommodate that ahead of time. But if it’s a last-minute change, and the beam has already been fabricated, you might have to field cut a hole, or add reinforcing. Or maybe the beam can’t accommodate the hole at all, and you need to redesign the entire piping system (which will of course impact other in-progress work.) And while this expensive redesign is happening, everyone else might need to stop their work.

On a nuclear plant, which can employ up to 5,000 construction workers at a time (one source described planning the temporary construction facilities as equivalent to planning the utilities for a small city), we might expect these sorts of disruptions to be especially severe. A 1980 study of nuclear plant craft workers found that 11 hours per week were lost due to lack of material and tool availability, 8 hours per week were lost to coordination with other work crews or to work-area overcrowding, and 5.75 hours per week were lost redoing work. Altogether, nearly 75% of working hours were lost or unproductively used.

(This will continue next week with Part II. Thanks to Titus Reed and Austin Vernon for reading drafts of this.)

Construction Physics is produced in partnership with the Institute for Progress, a Washington, DC-based think tank. You can learn more about their work by visiting their website.

You can also contact me on Twitter, LinkedIn, or by email: briancpotter@gmail.com
