The Age of the Amplifier

As we’ve noted more than a few times before, for most of the 20th century AT&T’s Bell Labs was the premier industrial research lab in the US. As part of its ongoing efforts to provide universal telephone service, Bell Labs generated numerous world-changing inventions, and accumulated more Nobel Prizes than any other industrial research lab.1 But the most important of its technical contributions proved to be useful far beyond the confines of the Bell System. Statistical process control, for instance, was invented by AT&T engineer Walter Shewhart to improve the manufacturing of AT&T’s electrical equipment at supplier company Western Electric. Since then, the methods have been successfully applied to all manner of manufacturing, from jet engines to semiconductors to container ships.

Interestingly, some of AT&T’s most important technological contributions — namely, the vacuum tube, the negative feedback amplifier, the transistor, and the laser — were (in whole or in part) the product of efforts to make new, better amplifiers for boosting electromagnetic signals. Amplifiers played a crucial role in the Bell System, making it possible to (among other things) connect telephones over long distances, but the value of these four amplifiers extended far beyond telephony. The vacuum tube became a crucial building block for electronics in the first half of the 20th century, used in everything from radio to television to the earliest computers. The negative feedback amplifier helped spawn the discipline of control theory, which is used today in the design of virtually every automated machine. The transistor is the foundation of modern digital computing and everything built on top of it. And the laser is used in everything from fiber-optic communications to industrial cutting machines to barcode scanners to printers.

It’s worth looking at why AT&T was so motivated to build better and better amplifiers, and why those efforts produced so many transformative inventions.

The vacuum tube

In 1876 Alexander Graham Bell placed the world’s first telephone call, summoning his assistant Thomas Watson from another room. By 1881, Bell’s company, the Bell Telephone Company (it wouldn’t become American Telephone and Telegraph, or AT&T, until 1899) had 100,000 customers. By the turn of the century AT&T was operating 1,300 telephone exchanges in the US, connecting over 800,000 customers with 2 million miles of wire.

The goal of the Bell System was “universal service” – to connect every telephone user with every other telephone user in the system. But by the early 20th century this quest was bumping up against technological limitations.

Telephones converted the sound of someone speaking to electrical signals, which were transmitted along wires until reaching a telephone on the other end, where they were converted back into sound. More specifically, in early telephones the sound from someone speaking would compress and decompress a chamber full of carbon granules, which would alter their electrical resistance, changing how much current flowed through them. At the other end, the electrical current would flow through an electromagnet, which pulled on a thin iron diaphragm; fluctuations in the electrical current would change the motion of the diaphragm, reproducing the speech.

But the farther electrical signals travelled, the more they would be attenuated. Resistance from the wire carrying them would convert some of the electrical energy into heat, and electrical current could “leak” between adjacent telephone wires. As the electrical signals got weaker and weaker, the sound would be less and less intelligible when reproduced, until it couldn’t be heard at all. If AT&T wanted to provide universal service, it would need a way to maintain the strength of the electrical signal as it traveled over long distances.

AT&T was able to partly resolve this problem using the loading coil, an invention of electrical engineer Michael Pupin. (Lines which had loading coils added to them were sometimes described as being “Pupinized.”) The loading coil added inductance (a tendency to resist changes in current) to telephone lines, which reduced signal attenuation. As a result, the loading coil roughly doubled the effective distance limit of telephone calls, from around 1000-1200 miles to closer to 2000 miles. But the loading coil merely reduced signal attenuation; the signal was still decaying as it traveled along the lines, just more slowly. Without some way of actually amplifying the telephone signals, the maximum distance for a telephone line was enough to connect New York to Denver, but not enough to reach the West Coast from New York and connect the entire country.
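To make the difference between slowing attenuation and actually amplifying concrete, here is a minimal sketch of a line whose loss accumulates steadily with distance. The loss budget and per-mile loss figures are hypothetical, chosen only so the unloaded reach lands near the 1,000-mile figure above, and the assumption that loading halves the per-mile loss is an illustration rather than a measured value.

```python
def reach_miles(loss_budget_db: float, loss_db_per_mile: float) -> float:
    """Distance at which accumulated line loss uses up the whole loss budget."""
    return loss_budget_db / loss_db_per_mile


def power_fraction_remaining(distance_miles: float, loss_db_per_mile: float) -> float:
    """Fraction of the original signal power left after travelling a given distance."""
    total_loss_db = distance_miles * loss_db_per_mile
    return 10 ** (-total_loss_db / 10)


# Hypothetical figures, for illustration only.
LOSS_BUDGET_DB = 30.0                 # loss beyond which speech is assumed unintelligible
UNLOADED_DB_PER_MILE = 0.03           # assumed loss of a plain line
LOADED_DB_PER_MILE = UNLOADED_DB_PER_MILE / 2   # assumed effect of loading coils

print(f"unloaded reach: {reach_miles(LOSS_BUDGET_DB, UNLOADED_DB_PER_MILE):.0f} miles")
print(f"loaded reach:   {reach_miles(LOSS_BUDGET_DB, LOADED_DB_PER_MILE):.0f} miles")
print(f"power left after 3000 loaded miles: "
      f"{power_fraction_remaining(3000, LOADED_DB_PER_MILE):.6f}")
```

In this toy model, halving the per-mile loss doubles the reach, but the signal still shrinks with every mile. Only a device that adds energy back into the line removes the distance limit.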

AT&T experimented with various mechanical amplifiers, which converted the electrical signals into mechanical movements and then back to electrical signals, but these were found to greatly distort the signal, and were not widely used. What was needed was an electronic amplifier, which could amplify the electrical signals directly, without the lossy and distorting effects of mechanical translation. In 1911, AT&T formed a special research branch to tackle the problem of long-distance transmission, and hired the young physicist Harold Arnold (who would later become the first director of research at Bell Labs) to research possible amplifiers based on the “new physics” of electrons.

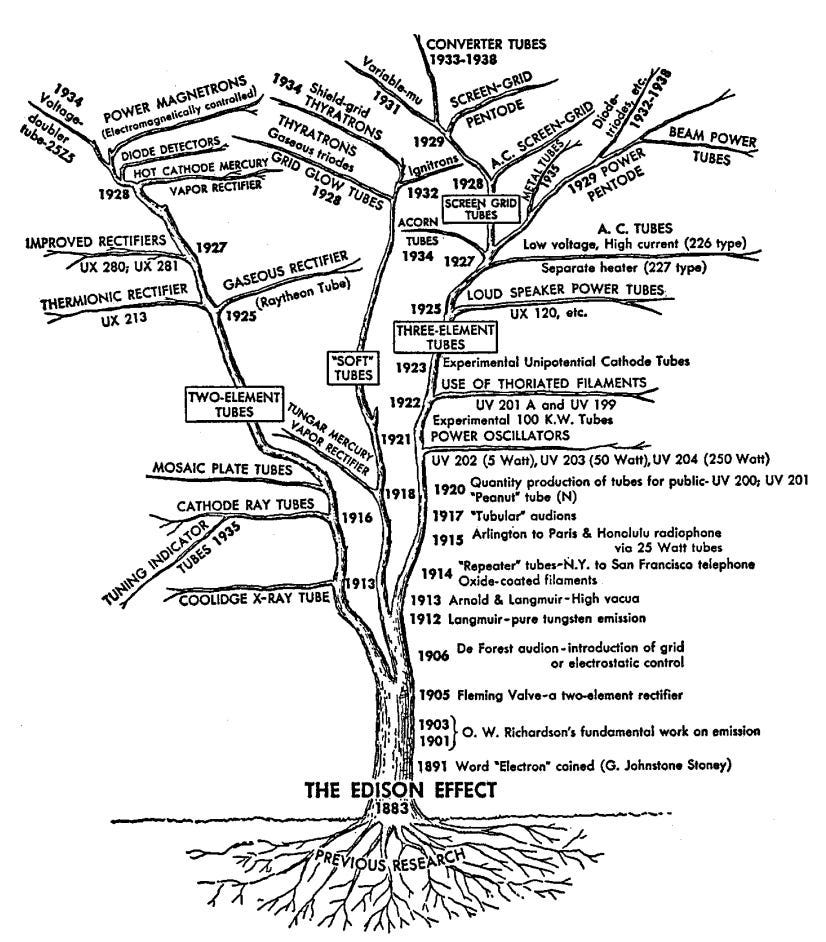

At first, Arnold had little success. He looked at a variety of possible amplifying technologies, and experimented extensively with mercury discharge tubes (which initially seemed promising), but nothing appeared to fit AT&T’s requirements. But in 1912, Arnold learned of a new, promising amplifier known as the audion, which had been brought to AT&T by American inventor Lee de Forest. De Forest’s audion was, in turn, based on an invention of the British physicist Ambrose Fleming, known as the “Fleming valve.” Fleming was inspired by extensive experimentation with what was known as the “Edison Effect:” the observation that in an incandescent bulb, electric current would flow from the heated filament to a nearby metal plate. Fleming used this effect to create a diode, a device which lets electric current flow in one direction but not another. De Forest modified Fleming’s valve by adding a third element, a metallic grid, between the filament and the plate. By varying the voltage at the metallic grid, De Forest eventually discovered he could control the flow of electrical current from the filament to the plate. This allowed the device to act as an amplifier: a small change in the voltage could create a much larger change in the current flowing from the filament to the plate.

De Forest’s audion had uneven performance — notably, it couldn’t handle the level of energy needed for a telephone line. Moreover, it was clear that De Forest did not quite understand how the device worked. But Arnold, well-versed in the physics of electrons, recognized its potential, and realized that, with modifications, its various limitations could be overcome. Via “The Continuous Wave,” a history of early radio:

Arnold knew exactly what to do about the audion’s limitations. “I suggested that we make the thing larger, increase the size of the plate with the corresponding increases in the size of the grid but particularly at that time I suggested that we were not getting enough electrons from the filament.” What he wanted to do, in fact, was convert the de Forest audion into a different kind of device. He wanted a much higher vacuum in the tube, with residual gas eliminated to the greatest possible extent; and he knew the newly invented Gaede molecular vacuum pump made that possible. He wanted more electron emission from the filament without an increase in filament voltage; and he knew Wehnelt’s new oxide-coated filaments would do that.

After paying $50,000 (roughly $1.6 million in 2026 dollars) for the rights to the audion, Arnold and others at AT&T spent the next year turning it into a practical electronic amplifier: the triode vacuum tube. By June 1914, vacuum tube amplifiers were being installed on a transcontinental telephone line connecting New York and San Francisco, and in January of 1915 the transcontinental line was inaugurated at the Panama-Pacific International Exposition with a call between Alexander Graham Bell in New York and Thomas Watson in San Francisco. By the late 1920s, AT&T was using over 100,000 vacuum tubes in its telephone system, and triodes and their descendants (four-element tetrodes, five-element pentodes) would go on to be used in all manner of electronic devices, from radios to TVs to the first digital computers.

The vacuum tube, with its ability to amplify electronic signals, represented a sea change in how AT&T engineers thought about telephone service. Prior to the electronic amplifier, a telephone call was essentially a single diminishing stream of electromagnetic energy. The range of that energy could be extended farther and farther from the speaker only at a steep cost to its fidelity. The amplifier made it possible to consider a telephone call as a stream of information, as a signal that was distinct from the medium that carried it. It could be renewed, translated, and modified in new and exciting ways. As historian David Mindell notes:

…a working amplifier could renew the signal at any point, and hence maintain it through complicated manipulations, making possible long strings of filters, modulators, and transmission lines. Electricity in the wires became merely a carrier of messages, not a source of power, and hence opened the door to new ways of thinking about communications…The message was no longer the medium, now it was a signal that could be understood and manipulated on its own terms, independent of its physical embodiment.

The negative feedback amplifier

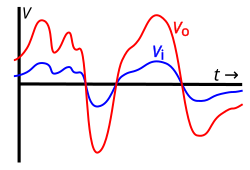

Thanks to vacuum tube amplifiers, AT&T could finally fulfill its dream of universal telephone service, connecting telephones to each other anywhere in the continental US. But vacuum tubes were far from perfect amplifiers. The ideal amplifier has a linear relationship between the input and the output, effectively multiplying the input current or voltage by some value. If this relationship is non-linear, some inputs will be multiplied more than others, and the signal will become distorted. This distortion can garble speech, and — on a wire carrying multiple telephone calls — can create cross-talk, with speech from one telephone call being heard on another.

The vacuum tube was a superior amplifier to anything that preceded it, but it wasn’t a perfectly linear amplifier; its amplification curve formed more of an S-shape, under-amplifying low values and over-amplifying high ones. For a line carrying a single telephone call, the resulting distortion could be mitigated by restricting inputs to the linear portion of the curve, but as AT&T adopted carrier modulation — carrying multiple calls at different frequencies on a single line — distortion became more of a problem.

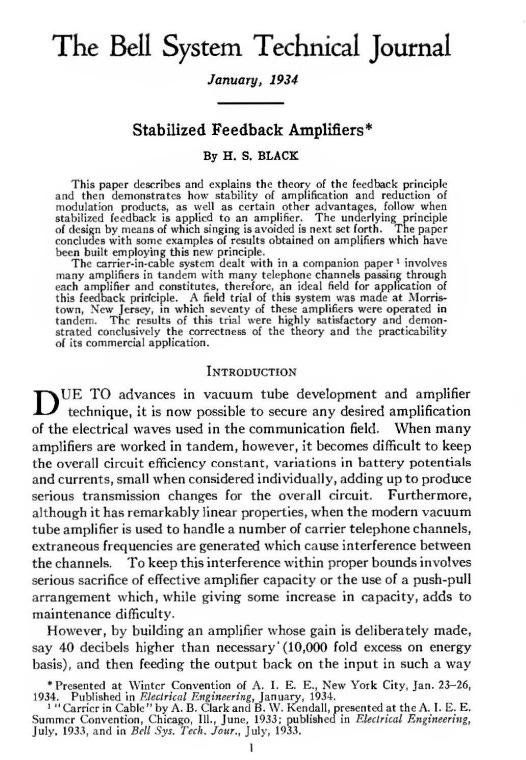

In 1921, Harold Black, a 23-year-old electrical engineer, joined AT&T. He soon produced a report analyzing the future potential of a transcontinental telephone line carrying thousands of carrier-modulated conversations. At the time, carrier modulation was being used to carry at most three calls on a single line. Black’s analysis showed that such a line would require an amplifier with far less distortion than existing vacuum tubes, and Black began to work on developing an improved amplifier as a side project.

At first, Black simply tried to create vacuum tubes with less distortion, a project that many others at AT&T were also working on. The efforts of Black and others produced higher-quality vacuum tubes, but nothing Black tried reduced the distortion to the degree he was aiming for.

After two years of failure, Black decided to pivot; rather than trying again and again to build a perfectly linear amplifier, he would accept that any amplifier he made might be imperfect, and instead find a way to remove the distortion that it introduced.

Black first tried to do this by subtracting the input signal from the amplifier’s output, leaving behind just the distortion, and then amplifying that distortion and subtracting that from the output signal. This method — the “feedforward amplifier” — worked, but not well. It required having two amplifiers (one for the original signal, and one for the distortion) that needed to have very precisely matched amplification characteristics, and maintaining that alignment over a wide range of frequencies and for a long period of time proved difficult. As Black noted later:

For example, every hour on the hour, 24 hours a day, somebody had to adjust the filament current to its correct value. In doing this, they were permitting plus or minus 1/2-to-1 dB variation in amplifier gain, whereas, for my purpose, the gain had to be absolutely perfect. In addition, every six hours it became necessary to adjust the B battery voltage, because the amplifier gain would be out of hand. There were other complications too, but these were enough! Nothing came of my efforts, however, because every circuit I devised turned out to be far too complex to be practical.

Black grappled with the problem over the next several years, until suddenly realizing the solution while taking the ferry to work one morning in 1927. An electromagnetic signal consisted of a wave, alternating back and forth between positive and negative voltage. If you took a fraction of the output from an amplifier, and subtracted that from the input signal before it got amplified (modifying the input with negative feedback), you would cancel out most of the distortion. Because this would reduce the strength of the input signal, it would have the downside of greatly reducing how much the signal would be amplified (known as the gain), but that was ok; the distortion would be reduced so much that you could get as much gain as you needed by stringing several such amplifiers together. And unlike Black’s feedforward amplifier, this negative feedback amplifier would be self-correcting: any unexpected change to the gain in the amplifier would become a change in the feedback signal, compensating for the change.
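The self-correcting behavior is easy to see in the standard textbook idealization of a feedback amplifier, sketched below. If an amplifier with raw gain A has a fraction beta of its output subtracted from its input, the overall gain works out to A / (1 + A * beta). The formula is the usual idealized one rather than something taken from Black's own papers, and the numbers are illustrative assumptions, not values from his amplifier.

```python
def closed_loop_gain(open_loop_gain: float, feedback_fraction: float) -> float:
    """Overall gain when a fraction of the output is subtracted from the input.

    From output = A * (input - beta * output), solving for output / input
    gives A / (1 + A * beta).
    """
    return open_loop_gain / (1.0 + open_loop_gain * feedback_fraction)


# Illustrative numbers only (assumptions, not Black's actual values).
BETA = 0.01                                        # fraction of the output fed back
nominal = closed_loop_gain(100_000.0, BETA)        # healthy amplifier
drifted = closed_loop_gain(50_000.0, BETA)         # raw gain halved, e.g. an aging tube

print(f"nominal closed-loop gain: {nominal:.2f}")
print(f"drifted closed-loop gain: {drifted:.2f}")
print(f"relative change: {abs(nominal - drifted) / nominal:.4%}")
```

With these numbers the raw gain of 100,000 is traded down to an overall gain of about 100, but halving the raw gain changes the overall gain by only about a tenth of a percent. The overall gain is set almost entirely by the feedback fraction, which can be fixed by stable passive components, rather than by the temperamental tube.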

By the end of the year, Black had built a negative feedback amplifier that reduced distortion by a factor of 100,000. But Black had a difficult time convincing others of the merits of his invention. At the time feedback was largely considered undesirable by electrical engineers. Feedback could cause an amplifier to “sing” and start generating its own output, known as self-oscillation, overwhelming the input signal. (Think of the high-pitched sound that you get when placing a microphone next to a speaker.) Engineers went to significant efforts to prevent feedback-related problems.

At the time it was also believed that an amplifier with high levels of feedback would be fundamentally unstable. Opposition to Black’s amplifier was so severe that securing a US patent required “long drawn-out arguments with the patent office,” and the British patent office treated the invention the way they treated perpetual motion machines, demanding a working model. Harold Arnold, who had since become director of research at Bell Labs, “refused to accept a negative feedback amplifier, and directed Black to design conventional amplifiers instead.”

In practice, keeping the amplifier stable (avoiding self-oscillation) while also stringing together amplifiers in sequence proved to be a complex problem. These issues were resolved in part thanks to the help of two other Bell Labs researchers, Harry Nyquist and Hendrik Bode. Nyquist and Bode studied the behavior of the negative feedback amplifier, and created mathematical tools for analyzing it and determining the conditions under which it would be stable.

Taken together, these efforts turned Black’s invention into a mainstay of electronics. Within 25 years, thanks to the work of Black, Nyquist, Bode, and others, the principle of negative feedback was “applied almost universally to amplifiers used for any purpose,” and it continues to be used in the design of modern amplifiers.

The effects of the Bell Labs work on negative feedback resonated far beyond amplification. A parallel tradition of feedback-based devices existed in mechanical engineering, in the design of things like governors and servomechanisms. During WWII, these two traditions began to merge into the modern discipline of control theory, which studies how to control a dynamic system using feedback. Feedback loops designed using control theory methods are the foundation of virtually every sort of automated system: aircraft autopilots, robotic arms, chemical plants, and the entire electrical grid all use feedback-based control loops, and the tools that Black, Nyquist, Bode, and others created to analyze the negative feedback amplifier are still used by control engineers around the world today.

The transistor

Even with negative feedback reducing their distortion, vacuum tubes were still far from ideal amplifiers. A vacuum tube is essentially a highly modified lightbulb, and has drawbacks similar to those of early lightbulbs: heating the filaments consumed a lot of power, and over time the tubes would burn out and need to be replaced. Mervin Kelly, a Bell Labs physicist who was promoted to director of research in 1936, wanted to replace the vacuum tube amplifiers and mechanical relays in the Bell System with solid-state devices, devices whose switching or amplification was done by way of electrons moving through a solid chunk of matter. Shortly after his promotion, Kelly began to hire physicists who were familiar with the then-novel physics of quantum mechanics, and could help better understand the behavior of solid matter. In 1938 Kelly created a group specifically devoted to solid-state research, and the team began working on building a solid-state amplifier using semiconductors: materials such as silicon, germanium, and copper oxide whose conductivity could be greatly varied. The group made progress (most notably, in 1939 Russell Ohl discovered that small amounts of impurities in silicon could drastically affect silicon’s conductivity when he accidentally created a photovoltaic cell in a silicon rod), but the work was soon disrupted by wartime priorities.

As the war progressed, Kelly recognized that wartime scientific and technological advances had the potential to upend the communications industry, and that if AT&T wanted to stay on top it needed to master the relevant sciences. He conceived of a new, major research program into solid-state physics that would be led by William Shockley, a physicist Bell Labs had hired in 1936 and who since 1938 had been researching solid-state devices.

During the war Shockley had worked on a variety of problems unrelated to semiconductors, including designing tactics for submarine warfare and training B-29 crews to use radar bombsights. But he also found time to work on a solid-state amplifier. In April of 1945, a few months before the end of the war, Shockley began to sketch out a device made from doped silicon (silicon with small amounts of impurities). Shockley hoped to use external electric fields to modify the conductivity of the silicon, amplifying the current flowing through it. His initial experiments, however, were met with failure.

A few months later, Kelly reorganized the research program at Bell Labs, creating a new, larger group wholly devoted to solid-state physics and led by Shockley. Among those who joined the new group were Walter Brattain, a physicist who had joined Bell Labs in 1929 and had previously studied copper oxide semiconductors, and John Bardeen, a new researcher from the Naval Ordnance Laboratory. Both were part of a subteam of the new solid-state physics group devoted specifically to the study of semiconductors.

Shortly after Bardeen joined, Shockley asked him to check Shockley’s calculations for the silicon amplifier in the hopes of learning why it hadn’t worked. Bardeen studied Shockley’s work, and eventually theorized that electrons might be getting “trapped” on the surface of the material, preventing the electric field from penetrating it and thus altering its conductivity. The semiconductor group began studying these “surface states,” and after nearly two years of experiments a breakthrough occurred.

Brattain had been studying surface states in silicon and germanium by shining light on the materials; if surface states existed, the light would knock away some electrons via the photovoltaic effect, “ripping holes in the semiconductor fabric.” Brattain discovered that not only was this electron disruption taking place, but that by varying the charge in an electrode above the surface of a piece of silicon, the strength of the photovoltaic effect could be varied significantly. The surface states themselves could be manipulated.

On November 21st, 1947 — a few days after Brattain’s discovery — Bardeen suggested using this ability to manipulate surface states to build an amplifier. They placed a sharp metal point onto the surface of a piece of doped silicon, and surrounded it with an electrolyte. By placing a small wire into the electrolyte and varying its voltage, they believed they could alter the conductivity of the silicon, and thus how much current flowed through it from the metal point.

The experiment worked: applying a voltage to the electrolyte boosted the current flowing through the metal point by about 10%. When Brattain rode home that night, he told the other members of his carpool that he’d “taken part in the most important experiment that I’d ever do in my life.”

Over the next several weeks, Bardeen and Brattain iterated on their new silicon amplifier. Initially its performance was poor, amplifying current only marginally, not amplifying voltage at all, and only functioning at very low electrical frequencies. But they eventually found that replacing the silicon with germanium allowed the device to amplify both current and voltage, and removing the electrolyte allowed it to amplify voltage over a wide range of frequencies.

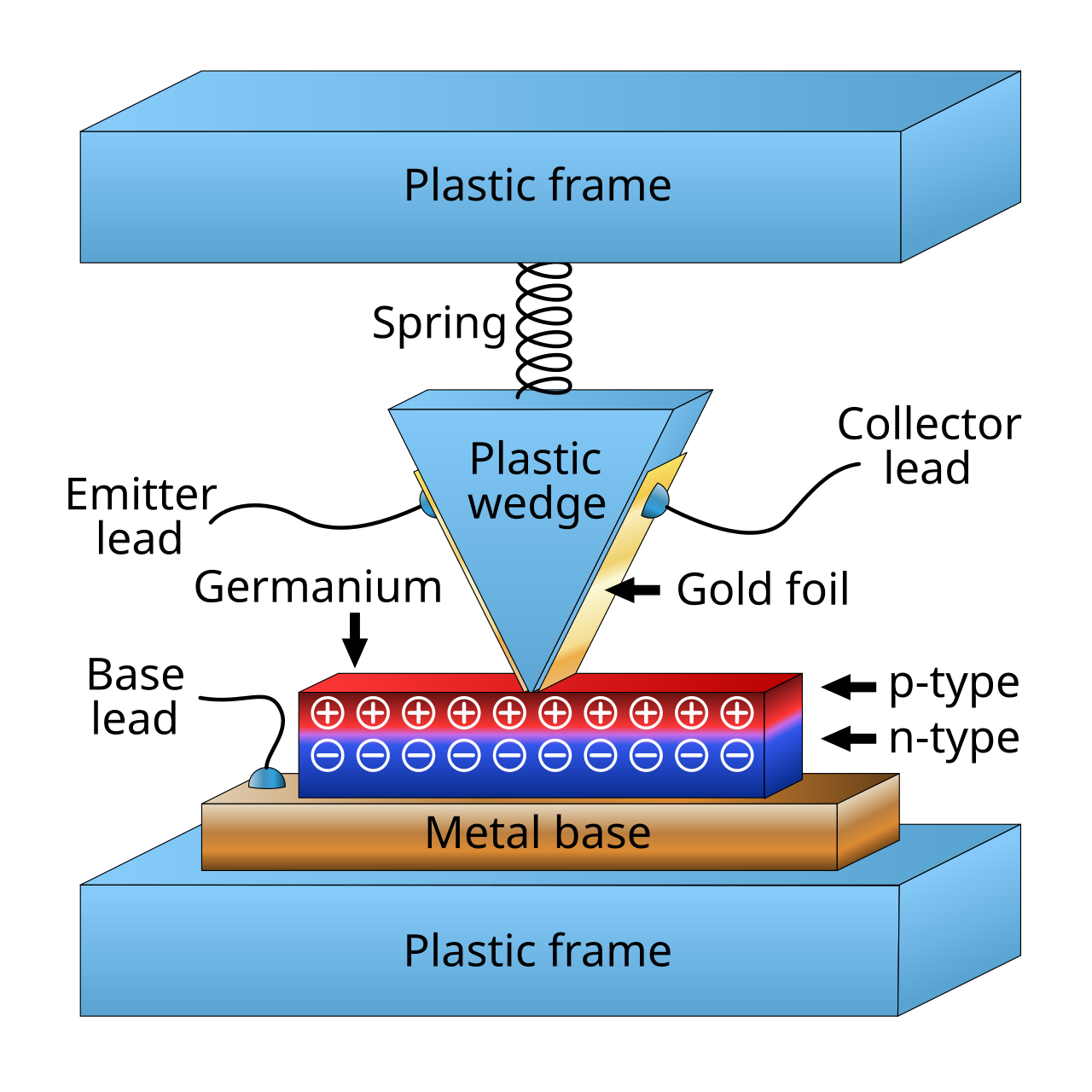

By the middle of December, Bardeen and Brattain were ready to apply what they had learned. They fashioned a new device consisting of two thin pieces of gold foil attached to the surface of a piece of doped germanium, separated by only a 500th of an inch. They connected one wire to each of the pieces of gold foil, and a third to the piece of germanium. They reasoned that varying the current in one of the gold foil wires should amplify the current flowing through the other gold wire.

It worked: both current and voltage could be amplified across a wide range of frequencies using the device. Bardeen and Brattain had fashioned a solid-state amplifier.

By early 1948, Bell Labs had fabricated nearly a hundred copies of Bardeen and Brattain’s amplifier (which would soon be named the transistor), and was testing how it could be used in various electronic devices. By the end of May, Bell Labs engineers had built a transistor-based telephone repeater. At a June 30th press conference, Bell Labs announced the transistor to the world:

We have called it the Transistor, T-R-A-N-S-I-S-T-O-R, because it is a resistor or semiconductor device which can amplify electrical signals as they are transferred through it from input to output terminals. It is, if you will, the electrical equivalent of a vacuum tube amplifier. But there the similarity ceases. It has no vacuum, no filament, no glass tube. It is composed entirely of cold, solid substances.

The rest, of course, is history.

Bell Labs worked out how to make the transistor more reliable, and by 1949 was manufacturing them by the thousands. William Shockley, irritated at not having taken part in Bardeen and Brattain’s discovery, worked to design an alternative semiconductor amplifier, the junction transistor. Shockley’s junction transistor, first successfully fabricated by Bell Labs physicists Gordon Teal and Morgan Sparks in 1950, was far more reliable than the point-contact transistor, and it would go on to be the most widely-used transistor until the MOSFET appeared in the 1960s. In 1956 Shockley, Bardeen, and Brattain would share the Nobel Prize in physics for the discovery of the “transistor effect,” by which time Shockley had left Bell Labs to found his own semiconductor company, Shockley Semiconductor Lab. In 1957, eight disgruntled employees would leave Shockley’s company to found Fairchild Semiconductor. Departing Fairchild employees in turn went on to found their own semiconductor companies, and the so-called “Fairchildren” (which include Intel and AMD) became the foundation of Silicon Valley. The world would never be the same.

The laser

As Shockley, Bardeen and Brattain were experimenting with semiconductors in the 1940s, another Bell Labs physicist, Charles Townes, was studying microwaves. Since the invention of radio in the late 19th century, the technology had progressed by steadily marching up the electromagnetic spectrum, finding ways to use shorter and shorter wavelengths. There were a variety of reasons for this: shorter wavelengths carried more information, there were limits to how many users could occupy a particular part of the electromagnetic spectrum, antennas for shorter wavelengths could be smaller, and radars with shorter wavelengths could resolve more detail. The shortest wavelengths in use in the 1920s were tens of meters in length, but by WWII this had fallen to centimeters in length; these were known as microwaves. During the war, at the behest of the military, Townes designed microwave radars of increasingly short wavelengths. When Townes designed a radar with a 10 centimeter wavelength, he was then asked to build a 3 centimeter one; when he built that, he was asked for a 1.25 centimeter wavelength.

But the pressure to achieve smaller and smaller wavelengths was bumping up against technological limitations. Generating and amplifying smaller and smaller wavelengths required smaller and smaller components; by the time wavelengths reached the millimeter range, the components to generate the signals became so small that manufacturing them, and putting enough power through them, became impractical. What was needed was a new way to generate and amplify radio signals that didn’t require fabricating microscopic components.

In 1948 Townes left Bell Labs for Columbia University, where he pursued research related to microwave spectroscopy. Townes hoped to use microwaves of increasingly small wavelengths, on the order of millimeters, to study molecules and atomic nuclei. Because of his interest and expertise, and because shorter-wavelength radiation might prove valuable for radars or other military uses, Townes was asked by the Navy in 1950 to form an advisory group on millimeter wave radiation, so the Navy could keep abreast of any promising developments in the field. As there was not yet any good method of generating millimeter waves, most of the group’s efforts were focused on thinking of ways to generate them.

In April of 1951, on the morning of a day-long meeting of the committee, Townes awoke early and sat on a bench in a nearby park, and began to consider ways to generate millimeter wave radiation. Certain molecules, Townes knew, radiated energy at microwave wavelengths: perhaps instead of small electronic components, he could use molecules to generate the short millimeter waves he and everyone else were looking for?

The idea was not initially very promising. Molecules would certainly radiate after absorbing energy, but they would necessarily emit less energy than they absorbed — you couldn’t simply shine a light or send a signal through a collection of molecules, and get a stronger signal back. Heated molecules would radiate energy, but to generate microwaves they would need to be so hot that they would break apart into individual atoms.

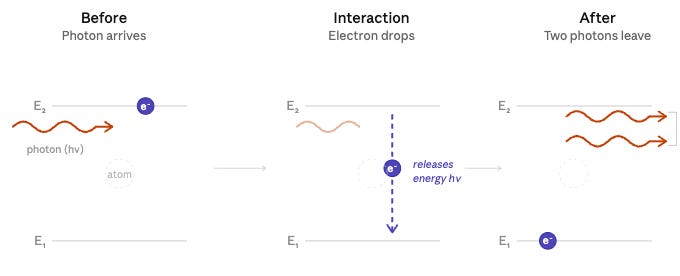

Townes did know of one way to get a molecule to boost an electromagnetic signal: stimulated emission. Normally, when an atom or molecule is struck by a photon, its energy level will be raised. Later, when it falls back to its normal state, it will emit a photon. However, if it’s struck by a photon while it’s already in an elevated energy state, it will emit a second photon of the exact same frequency: one photon becomes two.

Most molecules are usually in low-energy states, not high energy states, which means that any amplification would quickly peter out as excess photons were absorbed. But Townes realized that if a collection of molecules could be coaxed so that most of them were in high energy states — known as a “population inversion” — a cascade of stimulated emission could take place. One photon striking a high energy molecule would become two; each of those could strike another high energy molecule, becoming four, then becoming eight, then becoming 16. A small amount of electromagnetic radiation could be amplified enormously. With enough molecules in an elevated state, Townes realized that in principle there would be “no limit to the amount of energy obtainable.”
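A toy model shows why the population inversion matters. Assume (purely for illustration) that each photon meets exactly one molecule per step, that an excited molecule turns one photon into two, and that an unexcited molecule simply absorbs the photon. The expected photon count is then multiplied by twice the excited fraction at every step. The function name and the model itself are simplifications invented for this sketch, not a description of Townes's actual calculation.

```python
def expected_photons(start: float, excited_fraction: float, steps: int) -> float:
    """Expected photon count after a number of photon-molecule encounters.

    Toy model: a photon hitting an excited molecule becomes two photons;
    a photon hitting an unexcited molecule is absorbed (becomes zero).
    The expected multiplier per step is therefore 2 * excited_fraction.
    """
    growth_per_step = 2.0 * excited_fraction
    return start * growth_per_step ** steps


for fraction in (0.25, 0.5, 0.75, 1.0):
    count = expected_photons(start=1.0, excited_fraction=fraction, steps=10)
    print(f"excited fraction {fraction:.2f}: {count:.4f} photons after 10 steps")
```

In this sketch the signal dies away whenever fewer than half the molecules are excited, holds steady at exactly half, and grows exponentially above that. With every molecule excited, it reproduces the doubling sequence described above.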

Sitting in the park, Townes took an envelope from his pocket, and worked through how such a device might work. By feeding a stream of appropriately stimulated molecules into a resonator (a cavity that would reflect electromagnetic radiation of the appropriate wavelength), any electromagnetic radiation generated within the resonator would bounce back and forth, gaining energy each time it passed through the molecules as more and more photons were generated. The device would amplify any incoming signal as an electromagnetic wave passed through the population inversion molecules. And because the resonator would reflect the wave back and forth through the molecules (a form of feedback), it could also act as an oscillator — a generator of electromagnetic signals. Power would only be limited by how quickly molecules carried energy into the resonator.

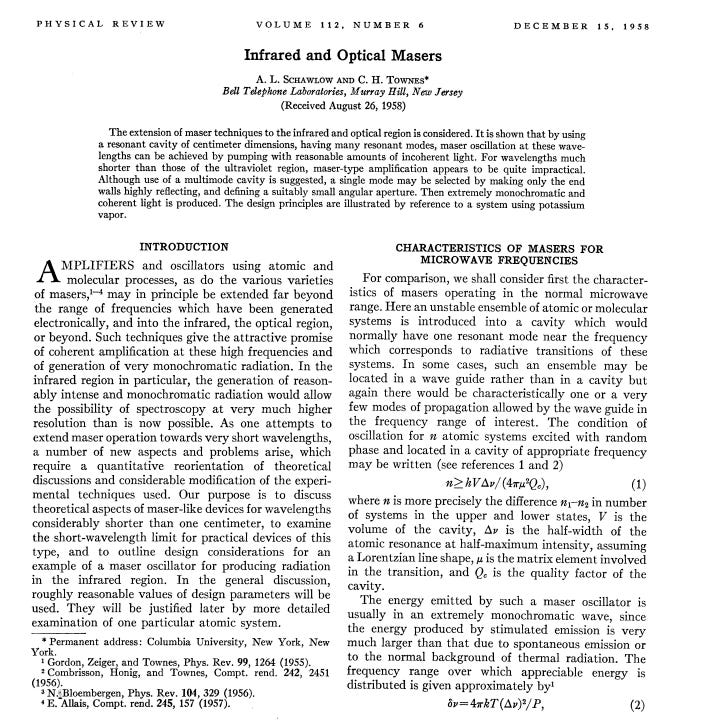

A few months later Townes assigned Jim Gordon, one of his graduate students, the task of building the device, assisted by another graduate student, Herb Zeiger. Over the next several years Gordon worked diligently to realize the ambitious concept. Many physicists considered the idea unpromising: at one point, several years into the project, the current and former heads of Townes’s department, Isidor Rabi and Polykarp Kusch, exasperatedly stated to Townes that “you should stop the work you are doing. It isn’t going to work. You know it’s not going to work. We know it’s not going to work. You’re wasting money. Just stop!” But finally in 1954, a few months after Kusch insisted the device wouldn’t work, Gordon succeeded, demonstrating both amplification and oscillation in a collection of stimulated ammonia molecules. They called their device the maser, an acronym describing the device’s Microwave Amplification by Stimulated Emission of Radiation.

The maser, once developed, eventually proved to be “the world’s most sensitive radio amplifier,” an order of magnitude more sensitive than existing microwave amplifiers. Bell Labs, which hired Gordon in 1955, used masers to amplify the signals from its Echo and Telstar satellites launched in the early 1960s. The maser also found use in radio telescopes for astronomy. But the maser had drawbacks — most notably, it had to be cooled to a few degrees above absolute zero — and it was gradually relegated to increasingly niche uses as other low-noise amplifiers were developed.

The biggest impact of the maser was likely the invention that it inspired. In 1957, Charles Townes, still attuned to the problem of generating shorter and shorter electromagnetic waves, began considering how the maser might be adapted to such a task. Historically, radio had advanced into shorter wavelengths gradually, one step at a time. But Townes realized that with the maser it might be as easy, or perhaps even easier, to skip from microwave wavelengths (roughly 1 meter to 1 millimeter) all the way down to infrared or even optical wavelengths (roughly 0.000000380 to 0.000000750 meters). By this time, Townes had re-joined Bell Labs as a part-time consultant, and Townes and another Bell Labs physicist, Art Schawlow, worked through the physics of what they referred to as an “optical maser.” In 1958, they published a paper outlining the idea.

After Townes and Schawlow’s paper was published, the race was on to build the optical maser, which soon began to be referred to as a laser (for Light Amplification by Stimulated Emission of Radiation). The first successful laser was built by Theodore Maiman at Hughes Aircraft in 1960 using a ruby crystal, but other successes quickly followed. It was soon discovered that a wide variety of materials could be made to “lase,” and before long there were lasers made from gases, dyes, glass, semiconductors, and more. In 1964, Townes would receive the Nobel Prize in physics for his work on the “maser-laser principle.”

While the laser could amplify signals that were passed into it, it proved much more useful as an oscillator, a generator of original signals. In 1959 Art Schawlow, believing that the laser would be more useful as an oscillator than an amplifier, jokingly suggested that it be renamed the LOSER. Unlike the maser, which was limited to niche uses, the laser proved to be useful for a wide variety of tasks. Within just two years of Maiman’s demonstration, a laser was used for eye surgery. By 1968, the US Air Force was dropping laser-guided bombs in combat. By 1971, Xerox PARC researchers had built the first laser printer, and in 1974 the first laser-based barcode scanners were being installed. In 1980, the first commercial fiber-optic lines using semiconductor lasers were made available. It’s the laser’s ability to generate coherent light (light all of the same frequency and in the same phase) that made it possible to continue to advance up the electromagnetic spectrum and use optical-wavelength electromagnetic radiation for communication.

Conclusion

Why did these various amplifiers, so many of them deriving from work at Bell Labs, end up being so valuable?

Partly it’s because an electronic amplifier is a very useful information processing device. An amplifier can amplify an electromagnetic signal, but it can also act as an electronic switch. And if the output of an amplifier is fed back into its input, an amplifier can also act as an oscillator: a generator of electromagnetic signals. Those are all useful information processing tasks, and each time a new, better amplifier was created, it extended the kinds of information processing that could be done. So these amplifiers were valuable because electronic information processing is valuable, and these amplifiers all greatly expanded the scope of information processing.

But a broader, somewhat more abstract reason is that many important technologies often act as amplifiers in one way or another. One of the most important inventions in biology, for instance, is the polymerase chain reaction, or PCR. PCR is essentially a DNA amplifier: it makes millions of copies of DNA sequences, making it far easier to study them and enabling things like the dramatic reduction in the price of genetic sequencing. The inventor of PCR, Kary Mullis, shared the 1993 Nobel Prize in Chemistry for his invention.
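The “millions of copies” figure follows from simple doubling arithmetic. The sketch below assumes an idealized reaction in which every cycle exactly doubles the number of copies; real reactions are less than perfectly efficient and eventually plateau, so these numbers are upper bounds. The function names are invented for this illustration.

```python
# Illustrative sketch of idealized PCR amplification.
# Assumption: each thermal cycle exactly doubles the number of DNA copies.


def ideal_pcr_copies(start_copies: int, cycles: int) -> int:
    """Copies present after `cycles` perfect doublings of `start_copies`."""
    if start_copies < 0 or cycles < 0:
        raise ValueError("start_copies and cycles must be non-negative")
    return start_copies * 2 ** cycles


def cycles_to_reach(target_copies: int, start_copies: int = 1) -> int:
    """Smallest number of perfect doublings needed to reach `target_copies`."""
    if start_copies < 1:
        raise ValueError("need at least one starting copy")
    cycles = 0
    copies = start_copies
    while copies < target_copies:
        copies *= 2
        cycles += 1
    return cycles


if __name__ == "__main__":
    for n in (10, 20, 30):
        print(f"{n} cycles: {ideal_pcr_copies(1, n):,} copies")
    print("cycles to reach one million copies:", cycles_to_reach(1_000_000))
```

In this idealized model, a single molecule passes a million copies after 20 cycles and a billion after 30, which is the sense in which PCR behaves like an amplifier with enormous gain.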

Examples of important amplifiers abound, because amplifiers themselves help produce abundance. Chemical catalysts, which can be thought of as amplifiers of chemical reactions, are used in everything from catalytic converters in cars to petroleum refining. Simple machines like the lever or pulley amplify force; microscopes and telescopes amplify visual details. Industrial fermentation, nuclear reactors, the printing press, the Xerox machine, fractional reserve banking: all can be thought of as a type of amplifier.

So these four amplifiers were important in part because amplifiers in general are important. Amplifiers take something useful — an electromagnetic signal, a segment of DNA, a copy of a book — and make it possible to get a lot more of it, and technologies that do that are often particularly useful themselves.

For simplicity’s sake, I’m considering research done at AT&T before Bell Labs was formally incorporated as being done by Bell Labs.
